Events on January 26, 2022

Liz Marai, University of Illinois Presents:

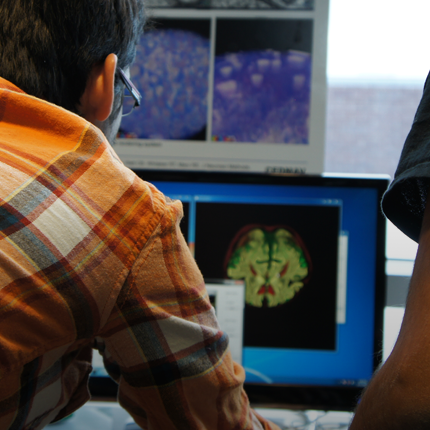

What is a "good" visual explanation of AI?

January 26, 2022 at 12:00 pm for 1 hour

https://utah.zoom.us/j/99318527933 Password: sci_vis

Abstract:

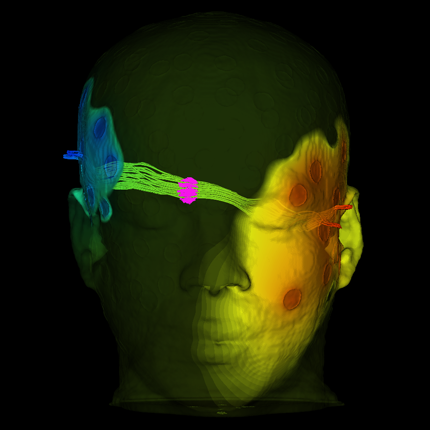

Healthcare has been one of the oldest consumers of artificial intelligence methods, dating back to the early 1970s. Still, today only about 18% of healthcare decisions are made using rule-based systems, an even smaller share (2%) use ML systems, and the majority of decisions are still based on heuristics. Data visualization can play an important role as a facilitator and enabler of AI/ML adoption. In this talk, I will explore what visual explainability in AI means, beyond the interpretability of deep learning models, and why it matters. I will then focus on explaining spatial clustering models to clinical audiences, drawing on a multi-year collaboration among visual computing, radiation oncology, and data mining experts. Finally, I will reflect on the lessons learned through the successful design, deployment, and dissemination of explanatory visual encodings to clinical audiences, with particular emphasis on Transparency, Domain sense, Actionability, and Generalizability.

Bio: Liz Marai is an associate professor of Computer Science at the University of Illinois at Chicago. Her research interests range from visual-system-related problems that can be robustly solved through automation, to problems that require human experts in the computational loop, and the principles behind this work. Marai's research has been recognized by multiple prestigious awards, including a Test of Time award and Outstanding Paper awards, along with her students; an NSF CAREER Award and multiple NSF awards; and several multi-site NIH R01 awards as a lead investigator. She has co-authored scientific open-source software adopted by thousands of users, and she is a patent co-author whose algorithms have been embedded in a medical device.

Posted by: Nathan Galli