Scientific Computing

Numerical simulation of real-world phenomena provides fertile ground for building interdisciplinary relationships. The SCI Institute has a long tradition of building these relationships in a win-win fashion: a win for the theoretical and algorithmic development of numerical modeling and simulation techniques, and a win for the discipline-specific science of interest. High-order and adaptive methods, uncertainty quantification, complexity analysis, and parallelization are just some of the topics being investigated by SCI faculty. These techniques are being applied to a wide variety of engineering problems, ranging from fluid mechanics and solid mechanics to bioelectricity.

Valerio Pascucci

Scientific Data Management

Chris Johnson

Problem Solving Environments

Ross Whitaker

GPUs

Chuck Hansen

GPUs

Funded Research Projects:

Publications in Scientific Computing:

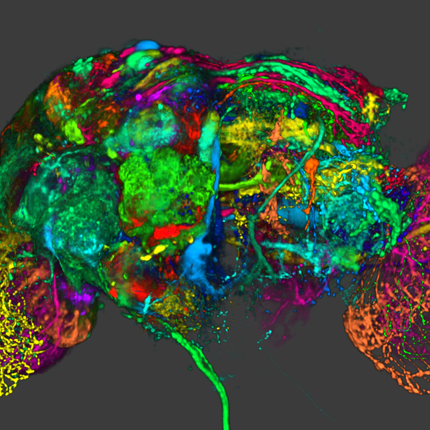

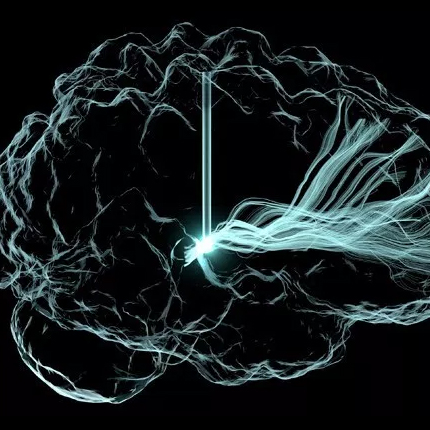

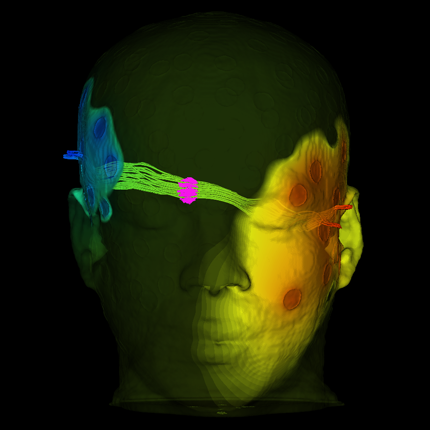

Grand Challenges at the Interface of Engineering and Medicine

S. Subramaniam, M. Miller, several co-authors, Chris R. Johnson, et al. In IEEE Open Journal of Engineering in Medicine and Biology, Vol. 5, IEEE, pp. 1–13. 2024. DOI: 10.1109/OJEMB.2024.3351717

Over the past two decades Biomedical Engineering has emerged as a major discipline that bridges societal needs of human health care with the development of novel technologies. Every medical institution is now equipped at varying degrees of sophistication with the ability to monitor human health in both non-invasive and invasive modes. The multiple scales at which human physiology can be interrogated provide a profound perspective on health and disease. We are at the nexus of creating “avatars” (herein defined as an extension of “digital twins”) of human patho/physiology to serve as paradigms for interrogation and potential intervention. Motivated by the emergence of these new capabilities, the IEEE Engineering in Medicine and Biology Society, the Departments of Biomedical Engineering at Johns Hopkins University and Bioengineering at University of California at San Diego sponsored an interdisciplinary workshop to define the grand challenges that face biomedical engineering and the mechanisms to address these challenges. The Workshop identified five grand challenges with cross-cutting themes and provided a roadmap for new technologies, identified new training needs, and defined the types of interdisciplinary teams needed for addressing these challenges. The themes presented in this paper include: 1) accumedicine through creation of avatars of cells, tissues, organs and whole human; 2) development of smart and responsive devices for human function augmentation; 3) exocortical technologies to understand brain function and treat neuropathologies; 4) the development of approaches to harness the human immune system for health and wellness; and 5) new strategies to engineer genomes and cells.

Accelerating Data-Intensive Seismic Research Through Parallel Workflow Optimization and Federated Cyberinfrastructure

M. Adair, I. Rodero, M. Parashar, D. Melgar. In Proceedings of the SC '23 Workshops of The International Conference on High Performance Computing, Network, Storage, and Analysis, ACM, pp. 1970–1977. 2023. DOI: 10.1145/3624062.3624276

Earthquake early warning systems use synthetic data from simulation frameworks like MudPy to train models for predicting the magnitudes of large earthquakes. MudPy, although powerful, has limitations: a lengthy simulation time to generate the required data, lack of user-friendliness, and no platform for discovering and sharing its data. We introduce FakeQuakes DAGMan Workflow (FDW), which utilizes Open Science Grid (OSG) for parallel computations to accelerate and streamline MudPy simulations. FDW significantly reduces runtime and increases throughput compared to a single-machine setup. Using FDW, we also explore partitioned parallel HTCondor DAGMan workflows to enhance OSG efficiency. Additionally, we investigate leveraging cyberinfrastructure, such as Virtual Data Collaboratory (VDC), for enhancing MudPy and OSG. Specifically, we simulate using Cloud bursting policies to enforce FDW job-offloading to VDC during OSG peak demand, addressing shared resource issues and user goals; we also discuss VDC’s value in facilitating a platform for broad access to MudPy products.

Strengthening and Democratizing Artificial Intelligence Research and Development

M. Parashar, T. deBlanc-Knowles, E. Gianchandani, L.E. Parker. In Computer, Vol. 56, No. 11, IEEE, pp. 85–90. 2023. DOI: 10.1109/MC.2023.3284568

This article summarizes the vision, roadmap, and implementation plan for a National Artificial Intelligence Research Resource that aims to provide a widely accessible cyberinfrastructure for artificial intelligence R&D, with the overarching goal of bridging the resource–access divide.

HiPPIS: A High-Order Positivity-Preserving Mapping Software for Structured Meshes

T. A. J. Ouermi, R. M. Kirby, M. Berzins. In ACM Trans. Math. Softw., ACM, Nov, 2023. ISSN: 0098-3500 DOI: 10.1145/3632291

Polynomial interpolation is an important component of many computational problems. In several of these computational problems, failure to preserve positivity when using polynomials to approximate or map data values between meshes can lead to negative unphysical quantities. Currently, most polynomial-based methods for enforcing positivity are based on splines and polynomial rescaling. The spline-based approaches build interpolants that are positive over the intervals in which they are defined and may require solving a minimization problem and/or system of equations. The linear polynomial rescaling methods allow for high-degree polynomials but enforce positivity only at limited locations (e.g., quadrature nodes). This work introduces open-source software (HiPPIS) for high-order data-bounded interpolation (DBI) and positivity-preserving interpolation (PPI) that addresses the limitations of both the spline and polynomial rescaling methods. HiPPIS is suitable for approximating and mapping physical quantities such as mass, density, and concentration between meshes while preserving positivity. This work provides Fortran and Matlab implementations of the DBI and PPI methods, presents an analysis of the mapping error in the context of PDEs, and uses several 1D and 2D numerical examples to demonstrate the benefits and limitations of HiPPIS.

Toward Democratizing Access to Science Data: Introducing the National Data Platform

M. Parashar, I. Altintas. In IEEE 19th International Conference on e-Science, IEEE, 2023. DOI: 10.1109/e-Science58273.2023.10254930

Open and equitable access to scientific data is essential to addressing important scientific and societal grand challenges, and to research enterprise more broadly. This paper discusses the importance and urgency of open and equitable data access, and explores the barriers and challenges to such access. It then introduces the vision and architecture of the National Data Platform, a recently launched project aimed at catalyzing an open, equitable and extensible data ecosystem.

Dynamic Data-Driven Application Systems for Reservoir Simulation-Based Optimization: Lessons Learned and Future Trends

M. Parashar, T. Kurc, H. Klie, M.F. Wheeler, J.H. Saltz, M. Jammoul, R. Dong. In Handbook of Dynamic Data Driven Applications Systems: Volume 2, Springer International Publishing, pp. 287–330. 2023. DOI: 10.1007/978-3-031-27986-7_11

Since its introduction in the early 2000s, the Dynamic Data-Driven Applications Systems (DDDAS) paradigm has served as a powerful concept for continuously improving the quality of both models and data embedded in complex dynamical systems. The DDDAS unifying concept enables capabilities to integrate multiple sources and scales of data, mathematical and statistical algorithms, advanced software infrastructures, and diverse applications into a dynamic feedback loop. DDDAS has not only motivated notable scientific and engineering advances on multiple fronts, but it has been also invigorated by the latest technological achievements in artificial intelligence, cloud computing, augmented reality, robotics, edge computing, Internet of Things (IoT), and Big Data. Capabilities to handle more data in a much faster and smarter fashion is paving the road for expanding automation capabilities. The purpose of this chapter is to review the fundamental components that have shaped reservoir-simulation-based optimization in the context of DDDAS. The foundations of each component will be systematically reviewed, followed by a discussion on current and future trends oriented to highlight the outstanding challenges and opportunities of reservoir management problems under the DDDAS paradigm. Moreover, this chapter should be viewed as providing pathways for establishing a synergy between renewable energy and oil and gas industry with the advent of the DDDAS method.

TEMA: Event Driven Serverless Workflows Platform for Natural Disaster Management

C. Sicari, A. Catalfamo, L. Carnevale, A. Galletta, D. Balouek-Thomert, M. Parashar, M. Villari. In 2023 IEEE Symposium on Computers and Communications (ISCC), pp. 1–6. 2023. DOI: 10.1109/ISCC58397.2023.10217920

TEMA project is a Horizon Europe funded project that aims at addressing Natural Disaster Management by the use of sophisticated Cloud-Edge Continuum infrastructures by means of data analysis algorithms wrapped in Serverless functions deployed on a distributed infrastructure according to a Federated Learning scheduler that constantly monitors the infrastructure in search of the best way to satisfy required QoS constraints. In this paper, we discuss the advantages of Serverless workflow and how they can be used and monitored to natively trigger complex algorithm pipelines in the continuum, dynamically placing and relocating them taking into account incoming IoT data, QoS constraints, and the current status of the continuum infrastructure. Therefore we presented the Urgent Function Enabler (UFE) platform, a fully distributed architecture able to define, spread, and manage FaaS functions, using local IOT data managed using the Fiware ecosystem and a computing infrastructure composed of mobile and stable nodes.