Scientific Computing

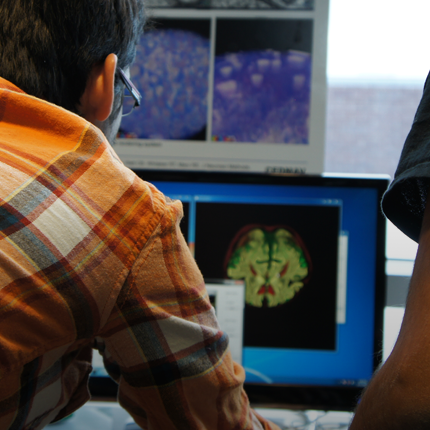

Numerical simulation of real-world phenomena provides fertile ground for building interdisciplinary relationships. The SCI Institute has a long tradition of building these relationships in a win-win fashion – a win for the theoretical and algorithmic development of numerical modeling and simulation techniques and a win for the discipline-specific science of interest. High-order and adaptive methods, uncertainty quantification, complexity analysis, and parallelization are just some of the topics being investigated by SCI faculty. These areas of computing are being applied to a wide variety of engineering applications ranging from fluid mechanics and solid mechanics to bioelectricity.

Valerio Pascucci

Scientific Data Management

Chris Johnson

Problem Solving Environments

Ross Whitaker

GPUs

Chuck Hansen

GPUs

Funded Research Projects:

Publications in Scientific Computing:

Visual Exploratory Analysis for Designing Large-Scale Network-on-Chip Architectures: A Domain Expert-Led Design Study, S. Wang, H. Yan, K.E. Isaacs, Y. Sun. In IEEE Transactions on Visualization and Computer Graphics, Vol. 30, pp. 1970-1983. 2024.

Visualization design studies bring together visualization researchers and domain experts to address yet unsolved data analysis challenges stemming from the needs of the domain experts. Typically, the visualization researchers lead the design study process and implementation of any visualization solutions. This setup leverages the visualization researchers' knowledge of methodology, design, and programming, but the availability to synchronize with the domain experts can hamper the design process. We consider an alternative setup where the domain experts take the lead in the design study, supported by the visualization experts. In this study, the domain experts are computer architecture experts who simulate and analyze novel computer chip designs. These chips rely on a Network-on-Chip (NOC) to connect components. The experts want to understand how the chip designs perform and what in the design led to their performance. To aid this analysis, we develop Vis4Mesh, a visualization system that provides spatial, temporal, and architectural context to simulated NOC behavior. Integration with an existing computer architecture visualization tool enables architects to perform deep-dives into specific architecture component behavior. We validate Vis4Mesh through a case study and a user study with computer architecture researchers. We reflect on our design and process, discussing advantages, disadvantages, and guidance for engaging in domain expert-led design studies.

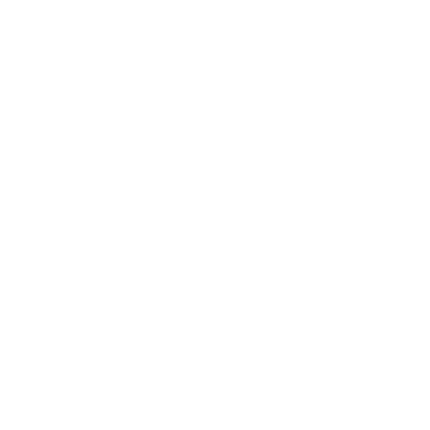

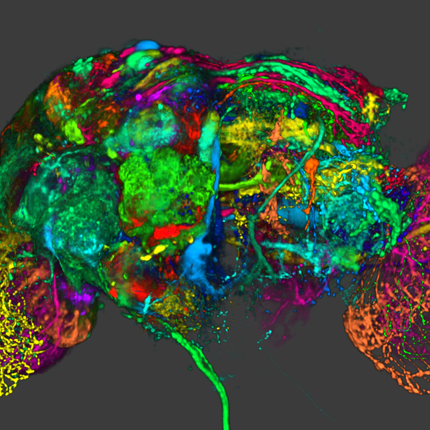

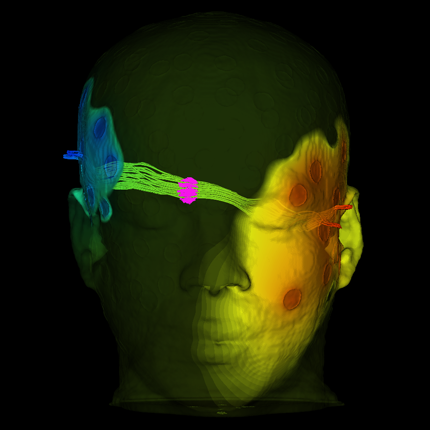

Grand Challenges at the Interface of Engineering and Medicine, S. Subramaniam, M. Miller, several co-authors, Chris R. Johnson, et al. In IEEE Open Journal of Engineering in Medicine and Biology, Vol. 5, IEEE, pp. 1-13. 2024. DOI: 10.1109/OJEMB.2024.3351717

Over the past two decades Biomedical Engineering has emerged as a major discipline that bridges societal needs of human health care with the development of novel technologies. Every medical institution is now equipped at varying degrees of sophistication with the ability to monitor human health in both non-invasive and invasive modes. The multiple scales at which human physiology can be interrogated provide a profound perspective on health and disease. We are at the nexus of creating “avatars” (herein defined as an extension of “digital twins”) of human patho/physiology to serve as paradigms for interrogation and potential intervention. Motivated by the emergence of these new capabilities, the IEEE Engineering in Medicine and Biology Society, the Departments of Biomedical Engineering at Johns Hopkins University and Bioengineering at University of California at San Diego sponsored an interdisciplinary workshop to define the grand challenges that face biomedical engineering and the mechanisms to address these challenges. The Workshop identified five grand challenges with cross-cutting themes and provided a roadmap for new technologies, identified new training needs, and defined the types of interdisciplinary teams needed for addressing these challenges. The themes presented in this paper include: 1) accumedicine through creation of avatars of cells, tissues, organs and whole human; 2) development of smart and responsive devices for human function augmentation; 3) exocortical technologies to understand brain function and treat neuropathologies; 4) the development of approaches to harness the human immune system for health and wellness; and 5) new strategies to engineer genomes and cells.