SCI Publications

2024

M. Han, J. Li, S. Sane, S. Gupta, B. Wang, S. Petruzza, C. R. Johnson.

“Interactive Visualization of Time-Varying Flow Fields Using Particle Tracing Neural Networks,” Subtitled “arXiv preprint arXiv:2312.14973,” 2024.

Lagrangian representations of flow fields have gained prominence for enabling fast, accurate analysis and exploration of time-varying flow behaviors. In this paper, we present a comprehensive evaluation to establish a robust and efficient framework for Lagrangian-based particle tracing using deep neural networks (DNNs). Han et al. (2021) first proposed a DNN-based approach to learn Lagrangian representations and demonstrated accurate particle tracing for an analytic 2D flow field. In this paper, we extend and build upon this prior work in significant ways. First, we evaluate the performance of DNN models to accurately trace particles in various settings, including 2D and 3D time-varying flow fields, flow fields from multiple applications, flow fields with varying complexity, as well as structured and unstructured input data. Second, we conduct an empirical study to inform best practices with respect to particle tracing model architectures, activation functions, and training data structures. Third, we conduct a comparative evaluation of prior techniques that employ flow maps as input for exploratory flow visualization. Specifically, we compare our extended model against its predecessor by Han et al. (2021), as well as the conventional approach that uses triangulation and Barycentric coordinate interpolation. Finally, we consider the integration and adaptation of our particle tracing model with different viewers. We provide an interactive web-based visualization interface by leveraging the efficiencies of our framework, and perform high-fidelity interactive visualization by integrating it with an OSPRay-based viewer. Overall, our experiments demonstrate that using a trained DNN model to predict new particle trajectories requires a low memory footprint and results in rapid inference. 
Following best practices for large 3D datasets, our deep learning approach using GPUs for inference is shown to require approximately 46 times less memory while being more than 400 times faster than the conventional methods.

2023

T. M. Athawale, C. R. Johnson, S. Sane, D. Pugmire.

“Fiber Uncertainty Visualization for Bivariate Data With Parametric and Nonparametric Noise Models,” In IEEE Transactions on Visualization and Computer Graphics, Vol. 29, No. 1, IEEE, pp. 613-623. 2023.

Visualization and analysis of multivariate data and their uncertainty are top research challenges in data visualization. Constructing fiber surfaces is a popular technique for multivariate data visualization that generalizes the idea of level-set visualization for univariate data to multivariate data. In this paper, we present a statistical framework to quantify positional probabilities of fibers extracted from uncertain bivariate fields. Specifically, we extend the state-of-the-art Gaussian models of uncertainty for bivariate data to other parametric distributions (e.g., uniform and Epanechnikov) and more general nonparametric probability distributions (e.g., histograms and kernel density estimation) and derive corresponding spatial probabilities of fibers. In our proposed framework, we leverage Green’s theorem for closed-form computation of fiber probabilities when bivariate data are assumed to have independent parametric and nonparametric noise. Additionally, we present a nonparametric approach combined with numerical integration to study the positional probability of fibers when bivariate data are assumed to have correlated noise. For uncertainty analysis, we visualize the derived probability volumes for fibers via volume rendering and extracting level sets based on probability thresholds. We present the utility of our proposed techniques via experiments on synthetic and simulation datasets.

C. R. Johnson, H. Shen.

“AI for Scientific Visualization,” In Artificial Intelligence for Science, Edited by Alok Choudhary, Geoffrey Fox, and Tony Hey, World Scientific, pp. 535-552. 2023.

DOI: 10.1142/9789811265679_0029

C. Peters, T. Patel, W. Usher, C. R. Johnson.

“Ray Tracing Spherical Harmonics Glyphs,” In Vision, Modeling, and Visualization, The Eurographics Association, 2023.

DOI: 10.2312/vmv.20231223

Spherical harmonics glyphs are an established way to visualize high angular resolution diffusion imaging data. Starting from a unit sphere, each point on the surface is scaled according to the value of a linear combination of spherical harmonics basis functions. The resulting glyph visualizes an orientation distribution function. We present an efficient method to render these glyphs using ray tracing. Our method constructs a polynomial whose roots correspond to ray-glyph intersections. This polynomial has degree 2k + 2 for spherical harmonics bands 0, 2, ..., k. We then find all intersections in an efficient and numerically stable fashion through polynomial root finding. Our formulation also gives rise to a simple formula for normal vectors of the glyph. Additionally, we compute a nearly exact axis-aligned bounding box to make ray tracing of these glyphs even more efficient. Since our method finds all intersections for arbitrary rays, it lets us perform sophisticated shading and uncertainty visualization. Compared to prior work, it is faster, more flexible and more accurate.

Z. Wang, T. M. Athawale, K. Moreland, J. Chen, C. R. Johnson, D. Pugmire.

“FunMC2: A Filter for Uncertainty Visualization of Marching Cubes on Multi-Core Devices,” In Eurographics Symposium on Parallel Graphics and Visualization, 2023.

DOI: 10.2312/pgv.20231081

Visualization is an important tool for scientists to extract understanding from complex scientific data. Scientists need to understand the uncertainty inherent in all scientific data in order to interpret the data correctly. Uncertainty visualization has been an active and growing area of research to address this challenge. Algorithms for uncertainty visualization can be expensive, and research efforts have been focused mainly on structured grid types. Further, support for uncertainty visualization in production tools is limited. In this paper, we adapt an algorithm for computing key metrics for visualizing uncertainty in Marching Cubes (MC) to multi-core devices and present the design, implementation, and evaluation for a Filter for uncertainty visualization of Marching Cubes on Multi-Core devices (FunMC2). FunMC2 accelerates the uncertainty visualization of MC significantly, and it is portable across multi-core CPUs and GPUs. Evaluation results show that FunMC2 based on OpenMP runs around 11× to 41× faster on multi-core CPUs than the corresponding serial version using one CPU core. FunMC2 based on a single GPU is around 5× to 9× faster than FunMC2 running by OpenMP. Moreover, FunMC2 is flexible enough to process ensemble data with both structured and unstructured mesh types. Furthermore, we demonstrate that FunMC2 can be seamlessly integrated as a plugin into ParaView, a production visualization tool for post-processing.

2022

T. M. Athawale, D. Maljovec, L. Yan, C. R. Johnson, V. Pascucci, B. Wang.

“Uncertainty Visualization of 2D Morse Complex Ensembles Using Statistical Summary Maps,” In IEEE Transactions on Visualization and Computer Graphics, Vol. 28, No. 4, pp. 1955-1966. April, 2022.

ISSN: 1077-2626

DOI: 10.1109/TVCG.2020.3022359

Morse complexes are gradient-based topological descriptors with close connections to Morse theory. They are widely applicable in scientific visualization as they serve as important abstractions for gaining insights into the topology of scalar fields. Data uncertainty inherent to scalar fields due to randomness in their acquisition and processing, however, limits our understanding of Morse complexes as structural abstractions. We, therefore, explore uncertainty visualization of an ensemble of 2D Morse complexes that arises from scalar fields coupled with data uncertainty. We propose several statistical summary maps as new entities for quantifying structural variations and visualizing positional uncertainties of Morse complexes in ensembles. Specifically, we introduce three types of statistical summary maps – the probabilistic map, the significance map, and the survival map – to characterize the uncertain behaviors of gradient flows. We demonstrate the utility of our proposed approach using wind, flow, and ocean eddy simulation datasets.

W. Bangerth, C. R. Johnson, D. K. Njeru, B. van Bloemen Waanders.

“Estimating and using information in inverse problems,” Subtitled “arXiv:2208.09095,” 2022.

For inverse problems one attempts to infer spatially variable functions from indirect measurements of a system. To practitioners of inverse problems, the concept of “information” is familiar when discussing key questions such as which parts of the function can be inferred accurately and which cannot. For example, it is generally understood that we can identify system parameters accurately only close to detectors, or along ray paths between sources and detectors, because we have “the most information” for these places.

Although referenced in many publications, the “information” that is invoked in such contexts is not a well understood and clearly defined quantity. Herein, we present a definition of information density that is based on the variance of coefficients as derived from a Bayesian reformulation of the inverse problem. We then discuss three areas in which this information density can be useful in practical algorithms for the solution of inverse problems, and illustrate the usefulness in one of these areas (how to choose the discretization mesh for the function to be reconstructed) using numerical experiments.

M. Han, S. Sane, C. R. Johnson.

“Exploratory Lagrangian-Based Particle Tracing Using Deep Learning,” In Journal of Flow Visualization and Image Processing, Begell, 2022.

DOI: 10.1615/JFlowVisImageProc.2022041197

Time-varying vector fields produced by computational fluid dynamics simulations are often prohibitively large and pose challenges for accurate interactive analysis and exploration. To address these challenges, reduced Lagrangian representations have been increasingly researched as a means to improve scientific time-varying vector field exploration capabilities. This paper presents a novel deep neural network-based particle tracing method to explore time-varying vector fields represented by Lagrangian flow maps. In our workflow, in situ processing is first utilized to extract Lagrangian flow maps, and deep neural networks then use the extracted data to learn flow field behavior. Using a trained model to predict new particle trajectories offers a fixed small memory footprint and fast inference. To demonstrate and evaluate the proposed method, we perform an in-depth study of performance using a well-known analytical data set, the Double Gyre. Our study considers two flow map extraction strategies, the impact of the number of training samples and integration durations on efficacy, evaluates multiple sampling options for training and testing, and informs hyperparameter settings. Overall, we find our method requires a fixed memory footprint of 10.5 MB to encode a Lagrangian representation of a time-varying vector field while maintaining accuracy. For post hoc analysis, loading the trained model costs only two seconds, significantly reducing the burden of I/O when reading data for visualization. Moreover, our parallel implementation can infer one hundred locations for each of two thousand new pathlines in 1.3 seconds using one NVIDIA Titan RTX GPU.

M. Han, T. M. Athawale, D. Pugmire, C. R. Johnson.

“Accelerated Probabilistic Marching Cubes by Deep Learning for Time-Varying Scalar Ensembles,” In 2022 IEEE Visualization and Visual Analytics (VIS), IEEE, pp. 155-159. 2022.

DOI: 10.1109/VIS54862.2022.00040

Visualizing the uncertainty of ensemble simulations is challenging due to the large size and multivariate and temporal features of ensemble data sets. One popular approach to studying the uncertainty of ensembles is analyzing the positional uncertainty of the level sets. Probabilistic marching cubes is a technique that performs Monte Carlo sampling of multivariate Gaussian noise distributions for positional uncertainty visualization of level sets. However, the technique suffers from high computational time, making interactive visualization and analysis impossible to achieve. This paper introduces a deep-learning-based approach to learning the level-set uncertainty for two-dimensional ensemble data with a multivariate Gaussian noise assumption. We train the model using the first few time steps from time-varying ensemble data in our workflow. We demonstrate that our trained model accurately infers uncertainty in level sets for new time steps and is up to 170X faster than that of the original probabilistic model with serial computation and 10X faster than that of the original parallel computation.

D. K. Njeru, T. M. Athawale, J. J. France, C. R. Johnson.

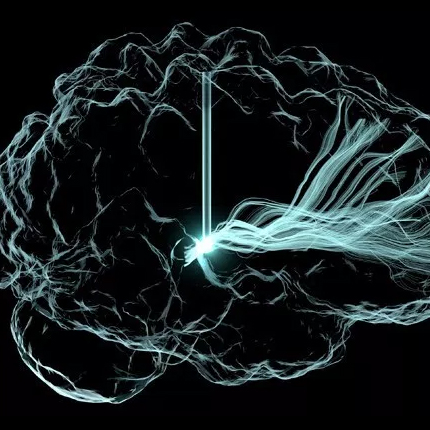

“Quantifying and Visualizing Uncertainty for Source Localisation in Electrocardiographic Imaging,” In Computer Methods in Biomechanics and Biomedical Engineering: Imaging & Visualization, Taylor & Francis, pp. 1-11. 2022.

DOI: 10.1080/21681163.2022.2113824

Electrocardiographic imaging (ECGI) presents a clinical opportunity to noninvasively understand the sources of arrhythmias for individual patients. To help increase the effectiveness of ECGI, we provide new ways to visualise associated measurement and modelling errors. In this paper, we study source localisation uncertainty in two steps: First, we perform Monte Carlo simulations of a simple inverse ECGI source localisation model with error sampling to understand the variations in ECGI solutions. Second, we present multiple visualisation techniques, including confidence maps, level-sets, and topology-based visualisations, to better understand uncertainty in source localisation. Our approach offers a new way to study uncertainty in the ECGI pipeline.

S. Sane, C. R. Johnson, H. Childs.

“Demonstrating the viability of Lagrangian in situ reduction on supercomputers,” In Journal of Computational Science, Vol. 61, Elsevier, 2022.

Performing exploratory analysis and visualization of large-scale time-varying computational science applications is challenging due to inaccuracies that arise from under-resolved data. In recent years, Lagrangian representations of the vector field computed using in situ processing are being increasingly researched and have emerged as a potential solution to enable exploration. However, prior works have offered limited estimates of the encumbrance on the simulation code as they consider “theoretical” in situ environments. Further, the effectiveness of this approach varies based on the nature of the vector field, benefitting from an in-depth investigation for each application area. With this study, an extended version of Sane et al. (2021), we contribute an evaluation of Lagrangian analysis viability and efficacy for simulation codes executing at scale on a supercomputer. We investigated previously unexplored cosmology and seismology applications as well as conducted a performance benchmarking study by using a hydrodynamics mini-application targeting exascale computing. To inform encumbrance, we integrated in situ infrastructure with simulation codes, and evaluated Lagrangian in situ reduction in representative homogeneous and heterogeneous HPC environments. To inform post hoc accuracy, we conducted a statistical analysis across a range of spatiotemporal configurations as well as a qualitative evaluation. Additionally, our study contributes cost estimates for distributed-memory post hoc reconstruction. In all, we demonstrate viability for each application — data reduction to less than 1% of the total data via Lagrangian representations, while maintaining accurate reconstruction and requiring under 10% of total execution time in over 90% of our experiments.

L. Zhou, M. Fan, C. Hansen, C. R. Johnson, D. Weiskopf.

“A Review of Three-Dimensional Medical Image Visualization,” In Health Data Science, Vol. 2022, 2022.

DOI: 10.34133/2022/9840519

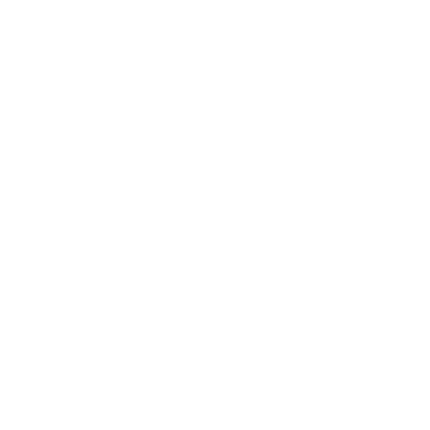

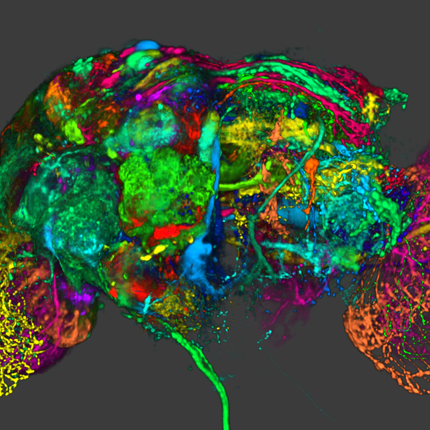

Importance. Medical images are essential for modern medicine and an important research subject in visualization. However, medical experts are often not aware of the many advanced three-dimensional (3D) medical image visualization techniques that could increase their capabilities in data analysis and assist the decision-making process for specific medical problems. Our paper provides a review of 3D visualization techniques for medical images, intending to bridge the gap between medical experts and visualization researchers. Highlights. Fundamental visualization techniques are revisited for various medical imaging modalities, from computational tomography to diffusion tensor imaging, featuring techniques that enhance spatial perception, which is critical for medical practices. The state-of-the-art of medical visualization is reviewed based on a procedure-oriented classification of medical problems for studies of individuals and populations. This paper summarizes free software tools for different modalities of medical images designed for various purposes, including visualization, analysis, and segmentation, and it provides respective Internet links. Conclusions. Visualization techniques are a useful tool for medical experts to tackle specific medical problems in their daily work. Our review provides a quick reference to such techniques given the medical problem and modalities of associated medical images. We summarize fundamental techniques and readily available visualization tools to help medical experts to better understand and utilize medical imaging data. This paper could contribute to the joint effort of the medical and visualization communities to advance precision medicine.

2021

T. M. Athawale, B. J. Stanislawski, S. Sane, C. R. Johnson.

“Visualizing Interactions Between Solar Photovoltaic Farms and the Atmospheric Boundary Layer,” In Twelfth ACM International Conference on Future Energy Systems, pp. 377-381. 2021.

The efficiency of solar panels depends on the operating temperature. As the panel temperature rises, efficiency drops. Thus, the solar energy community aims to understand the factors that influence the operating temperature, which include wind speed, wind direction, turbulence, ambient temperature, mounting configuration, and solar cell material. We use high-resolution numerical simulations to model the flow and thermal behavior of idealized solar farms. Because these simulations model such complex behavior, advanced visualization techniques are needed to investigate and understand the results. Here, we present advanced 3D visualizations of numerical simulation results to illustrate the flow and heat transport in an idealized solar farm. The findings can be used to understand how flow behavior influences module temperatures, and vice versa.

T. M. Athawale, S. Sane, C. R. Johnson.

“Uncertainty Visualization of the Marching Squares and Marching Cubes Topology Cases,” Subtitled “arXiv:2108.03066,” 2021.

Marching squares (MS) and marching cubes (MC) are widely used algorithms for level-set visualization of scientific data. In this paper, we address the challenge of uncertainty visualization of the topology cases of the MS and MC algorithms for uncertain scalar field data sampled on a uniform grid. The visualization of the MS and MC topology cases for uncertain data is challenging due to their exponential nature and the possibility of multiple topology cases per cell of a grid. We propose the topology case count and entropy-based techniques for quantifying uncertainty in the topology cases of the MS and MC algorithms when noise in data is modeled with probability distributions. We demonstrate the applicability of our techniques for independent and correlated uncertainty assumptions. We visualize the quantified topological uncertainty via color mapping proportional to uncertainty, as well as with interactive probability queries in the MS case and entropy isosurfaces in the MC case. We demonstrate the utility of our uncertainty quantification framework in identifying the isovalues exhibiting relatively high topological uncertainty. We illustrate the effectiveness of our techniques via results on synthetic, simulation, and hixel datasets.

T. M. Athawale, B. Ma, E. Sakhaee, C. R. Johnson, A. Entezari.

“Direct Volume Rendering with Nonparametric Models of Uncertainty,” In IEEE Transactions on Visualization and Computer Graphics, Vol. 27, No. 2, pp. 1797-1807. 2021.

DOI: 10.1109/TVCG.2020.3030394

We present a nonparametric statistical framework for the quantification, analysis, and propagation of data uncertainty in direct volume rendering (DVR). The state-of-the-art statistical DVR framework allows for preserving the transfer function (TF) of the ground truth function when visualizing uncertain data; however, the existing framework is restricted to parametric models of uncertainty. In this paper, we address the limitations of the existing DVR framework by extending the DVR framework for nonparametric distributions. We exploit the quantile interpolation technique to derive probability distributions representing uncertainty in viewing-ray sample intensities in closed form, which allows for accurate and efficient computation. We evaluate our proposed nonparametric statistical models through qualitative and quantitative comparisons with the mean-field and parametric statistical models, such as uniform and Gaussian, as well as Gaussian mixtures. In addition, we present an extension of the state-of-the-art rendering parametric framework to 2D TFs for improved DVR classifications. We show the applicability of our uncertainty quantification framework to ensemble, downsampled, and bivariate versions of scalar field datasets.

C. R. Johnson.

“Translational computer science at the Scientific Computing and Imaging Institute,” In Journal of Computational Science, Vol. 52, pp. 101217. 2021.

ISSN: 1877-7503

DOI: 10.1016/j.jocs.2020.101217

The Scientific Computing and Imaging (SCI) Institute at the University of Utah evolved from the SCI research group, started in 1994 by Professors Chris Johnson and Rob MacLeod. Over time, research centers funded by the National Institutes of Health, Department of Energy, and State of Utah significantly spurred growth, and SCI became a permanent interdisciplinary research institute in 2000. The SCI Institute is now home to more than 150 faculty, students, and staff. The history of the SCI Institute is underpinned by a culture of multidisciplinary, collaborative research, which led to its emergence as an internationally recognized leader in the development and use of visualization, scientific computing, and image analysis research to solve important problems in a broad range of domains in biomedicine, science, and engineering. A particular hallmark of SCI Institute research is the creation of open source software systems, including the SCIRun scientific problem-solving environment, Seg3D, ImageVis3D, Uintah, ViSUS, Nektar++, VisTrails, FluoRender, and FEBio. At this point, the SCI Institute has made more than 50 software packages broadly available to the scientific community under open-source licensing and supports them through web pages, documentation, and user groups. While the vast majority of academic research software is written and maintained by graduate students, the SCI Institute employs several professional software developers to help create, maintain, and document robust, tested, well-engineered open source software. The story of how and why we worked, and often struggled, to make professional software engineers an integral part of an academic research institute is crucial to the larger story of the SCI Institute’s success in translational computer science (TCS).

N. Marshak, P. Grosset, A. Knoll, J. P. Ahrens, C. R. Johnson.

“Evaluation of GPU Volume Rendering in PyTorch Using Data-Parallel Primitives,” In Eurographics Symposium on Parallel Graphics and Visualization (EGPGV), 2021.

Data-parallel programming (DPP) has attracted considerable interest from the visualization community, fostering major software initiatives such as VTK-m. However, there has been relatively little recent investigation of data-parallel APIs in higher-level languages such as Python, which could help developers sidestep the need for low-level application programming in C++ and CUDA. Moreover, machine learning frameworks exposing data-parallel primitives, such as PyTorch and TensorFlow, have exploded in popularity, making them attractive platforms for parallel visualization and data analysis. In this work, we benchmark data-parallel primitives in PyTorch, and investigate its application to GPU volume rendering using two distinct DPP formulations: a parallel scan and reduce over the entire volume, and repeated application of data-parallel operators to an array of rays. We find that most relevant DPP primitives exhibit performance similar to a native CUDA library. However, our volume rendering implementation reveals that PyTorch is limited in expressiveness when compared to other DPP APIs. Furthermore, while render times are sufficient for an early “proof of concept”, memory usage acutely limits scalability.

P. Rosen, A. Seth, E. Mills, A. Ginsburg, J. Kamenetzky, J. Kern, C. R. Johnson, B. Wang.

“Using Contour Trees in the Analysis and Visualization of Radio Astronomy Data Cubes,” In Topological Methods in Data Analysis and Visualization VI, Springer-Verlag, pp. 87-108. 2021.

The current generation of radio and millimeter telescopes, particularly the Atacama Large Millimeter Array (ALMA), offers enormous advances in observing capabilities. While these advances represent an unprecedented opportunity to facilitate scientific understanding, the increased complexity in the spatial and spectral structure of these ALMA data cubes leads to challenges in their interpretation. In this paper, we perform a feasibility study for applying topological data analysis and visualization techniques never before tested by the ALMA community. Using techniques based on contour trees, we seek to improve upon existing analysis and visualization workflows of ALMA data cubes, in terms of accuracy and speed in feature extraction. We review our development process in building effective analysis and visualization capabilities for the astrophysicists. We also summarize effective design practices by identifying domain-specific needs of simplicity, integrability, and reproducibility, in order to best target and service the large astrophysics community.

S. Sane, T. Athawale, C. R. Johnson.

“Visualization of Uncertain Multivariate Data via Feature Confidence Level-Sets,” In EuroVis 2021, 2021.

Recent advancements in multivariate data visualization have opened new research opportunities for the visualization community. In this paper, we propose an uncertain multivariate data visualization technique called feature confidence level-sets. Conceptually, feature level-sets refer to level-sets of multivariate data. Our proposed technique extends the existing idea of univariate confidence isosurfaces to multivariate feature level-sets. Feature confidence level-sets are computed by considering the trait for a specific feature, a confidence interval, and the distribution of data at each grid point in the domain. Using uncertain multivariate data sets, we demonstrate the utility of the technique to visualize regions with uncertainty in relation to the specific trait or feature, and the ability of the technique to provide secondary feature structure visualization based on uncertainty.

S. Sane, A. Yenpure, R. Bujack, M. Larsen, K. Moreland, C. Garth, C. R. Johnson, H. Childs.

“Scalable In Situ Computation of Lagrangian Representations via Local Flow Maps,” In Eurographics Symposium on Parallel Graphics and Visualization, The Eurographics Association, 2021.

DOI: 10.2312/pgv.20211040

In situ computation of Lagrangian flow maps to enable post hoc time-varying vector field analysis has recently become an active area of research. However, the current literature is largely limited to theoretical settings and lacks a solution to address scalability of the technique in distributed memory. To improve scalability, we propose and evaluate the benefits and limitations of a simple, yet novel, performance optimization. Our proposed optimization is a communication-free model resulting in local Lagrangian flow maps, requiring no message passing or synchronization between processes, intrinsically improving scalability, and thereby reducing overall execution time and alleviating the encumbrance placed on simulation codes from communication overheads. To evaluate our approach, we computed Lagrangian flow maps for four time-varying simulation vector fields and investigated how execution time and reconstruction accuracy are impacted by the number of GPUs per compute node, the total number of compute nodes, particles per rank, and storage intervals. Our study consisted of experiments computing Lagrangian flow maps with up to 67M particle trajectories over 500 cycles and used as many as 2048 GPUs across 512 compute nodes. In all, our study contributes an evaluation of a communication-free model as well as a scalability study of computing distributed Lagrangian flow maps at scale using in situ infrastructure on a modern supercomputer.