SCI is excited to announce six new projects that have been funded this past month. We look forward to sharing the research efforts from our amazing faculty and students.

EAGER: Exploring intelligent services for managing uncertainty under constraints across the Computing Continuum: A case study using the SAGE platform

Daniel Balouek-Thomert (NSF)

Advanced cyberinfrastructures seek to make streaming data a modality for the scientific community by offering rich sensing capabilities across the edge-to-Cloud Computing Continuum. For societal applications based on multiple geo-distributed data sources and sophisticated data analytics, such as wildfire detection or personal safety, these platforms can be incredibly transformative. However, it is challenging to match user constraints (response time, quality, energy) with what is feasible in a heterogeneous and dynamic computation environment coupled with uncertainty in the availability of data. Identifying events and accelerating responses critically depends on the capacity to select resources and configure services under uncertain operating conditions. This project develops software abstractions that react at runtime to unforeseen events and adapt the resources and computing paths between the edge and the cloud. This research is driven by the unique capabilities of the SAGE cyberinfrastructure as a case study to explore data-driven reactive behaviors at the network's edge on a national scale.

The project proposes intelligent services to drive computing optimization from urgent scenarios on large cyberinfrastructures. The project focuses on resource management and programming support around three interrelated tasks: (1) developing models for capturing the dynamics of edge resources; (2) researching software abstractions for reactive analytics; and (3) addressing cost/benefit tradeoffs to drive the autonomous reconfiguration of applications and resources under constraints. The outcomes are intended to directly extend the SAGE platform and provide artifacts for creating urgent analytics, which will benefit SAGE users and the scientific community.

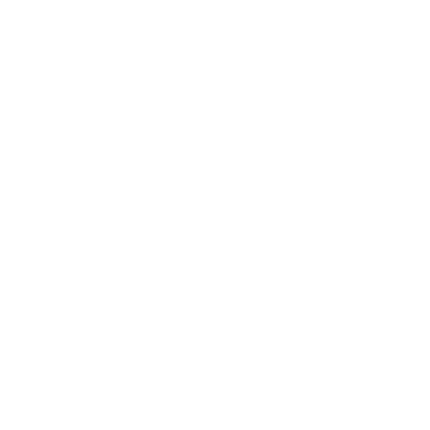

Geometry and Topology for Interpretable and Reliable Deep Learning in Medical Imaging

Bei Wang (NSF)

The goal of this project is to develop methods for making machine learning models interpretable and reliable, and thus bridge the trust gap to make machine learning translatable to the clinic. This project achieves this goal through investigation of the mathematical foundations, specifically the geometry and topology, of deep neural networks (DNNs). Based on these mathematical foundations, this project will develop computational tools that will improve the interpretability and reliability of DNNs. The methods developed in this project will be broadly applicable wherever deep learning is used, including health care, security, computer vision, and natural language processing.

Implicit Continuous Representations for Visualization of Complex Data

Bei Wang (DOE)

The proposed research investigates how to accurately and reliably visualize complex data consisting of multiple nonuniform domains and/or data types. Much of the problem stems from having no uniform representation of disparate datasets. To analyze such multimodal data, users face many choices in converting one modality to another, which confounds processing and visualization, even for experts. Recent work in alternative data models and representations that are continuous, high-order (nonlinear), and can be queried anywhere (i.e., implicit) suggests that such models can potentially represent multiple data sources in a consistent way. We will investigate models that are high-order, continuous, differentiable, potentially anti-differentiable (providing integrals in addition to derivatives), and can be evaluated anywhere in a continuous domain for scientific data analysis and visualization.

Algorithms, Theory, and Validation of Deep Graph Learning with Limited Supervision: A Continuous Perspective

Bao Wang (NSF)

Graph-structured data is ubiquitous in scientific and artificial intelligence applications, for instance, particle physics, computational chemistry, drug discovery, neuroscience, recommender systems, robotics, social networks, and knowledge graphs. Graph neural networks (GNNs) have achieved tremendous success in a broad class of graph learning tasks, including graph node classification, graph edge prediction, and graph generation. Nevertheless, GNNs have several bottlenecks: 1) In contrast to many deep networks such as convolutional neural networks, increasing the depth of GNNs has been observed to cause severe accuracy degradation, which the machine learning community has interpreted as over-smoothing. 2) The performance of GNNs relies heavily on a sufficient number of labeled graph nodes; the predictions of GNNs become significantly less reliable when less labeled data is available. This research aims to address these challenges by developing new mathematical understanding of GNNs and theoretically principled algorithms for graph deep learning with less training data. The project will train graduate students and postdoctoral associates through involvement in the research. The project will also integrate the research into teaching to advance data science education.

This project aims to develop next-generation continuous-depth GNNs leveraging computational mathematics tools and insights and to advance data-driven scientific simulation using the new GNNs. This project has three interconnected thrusts that revolve around pushing the envelope of theory and practice in graph deep learning with limited supervision using PDE and harmonic analysis tools: 1) developing a new generation of diffusion-based GNNs that are certifiably able to learn with deep architectures and less training data; 2) developing a new efficient attention-based approach for learning graph structures from the underlying data, accompanied by uncertainty quantification; and 3) application validation in learning-assisted scientific simulation and multi-modal learning, together with software development.
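The over-smoothing bottleneck described above can be illustrated with a toy example. The sketch below is not the project's method: it assumes a hypothetical five-node path graph, one scalar feature per node, and plain neighborhood averaging (a discrete diffusion step) with no learned weights. The helper names `diffusion_step` and `feature_spread` are made up for this illustration.

```python
# Toy illustration of over-smoothing (an assumption-laden sketch, not a GNN):
# repeated neighborhood averaging pulls all node features toward one value.

def diffusion_step(features, adjacency):
    """Replace each node's feature with the mean over itself and its neighbors."""
    smoothed = []
    for node, neighbors in enumerate(adjacency):
        group = [node] + neighbors
        smoothed.append(sum(features[j] for j in group) / len(group))
    return smoothed


def feature_spread(features):
    """Gap between the largest and smallest node feature."""
    return max(features) - min(features)


# Hypothetical path graph 0 - 1 - 2 - 3 - 4, stored as neighbor lists.
adjacency = [[1], [0, 2], [1, 3], [2, 4], [3]]
# Hypothetical initial features: the two end nodes start out clearly distinct.
features = [1.0, 0.0, 0.0, 0.0, -1.0]

# Track how distinguishable the nodes remain as "depth" grows.
spreads = [feature_spread(features)]
for _ in range(50):
    features = diffusion_step(features, adjacency)
    spreads.append(feature_spread(features))

print(f"spread at depth 0: {spreads[0]:.4f}")
print(f"spread at depth 50: {spreads[-1]:.6f}")
```

Each step is an average, so the spread can never grow, and on a connected graph it shrinks toward zero. Once the nodes become nearly indistinguishable, a classifier on top of these features has little to work with, which is one way to picture why stacking many such layers can hurt accuracy.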

Using Behavioral Nudges in Peer Review to Improve Critical Analysis in STEM Courses

Paul Rosen (NSF)

This project aims to serve the national interest by increasing the quality of peer reviews given by students. Peer reviews, in which students have the opportunity to analyze and evaluate projects made by their classroom peers, are a widely acknowledged pedagogical method for engaging students and have become a standard practice in undergraduate education. Peer review is most often used in classes with a large number of students to provide timely feedback on student assignments. However, peer review has benefits far beyond scalability. Peer review gathers diverse feedback and raises students’ comfort level with having their work evaluated in a professional setting. Most importantly, the act of giving a peer review is often more valuable than receiving one. The software platforms in current use that support peer review have seen limited innovation in recent decades, and potential improvements to enhance student outcomes have not been thoroughly evaluated. This project plans to develop and study an innovative peer review system that uses behavioral nudges, a method of subtly reinforcing positive habits, to improve the evaluation skills of students and the quality of the feedback they provide in peer reviews.

This project proposes to 1) create a software system supporting peer review that includes behavioral nudges for guiding the peer review process to hone students’ evaluation and critical analysis skills and 2) study how the use of behavioral nudges improves student engagement. The approach will be evaluated using four different visualization courses that serve approximately 800 students per year. Two groups of students will be studied. The control group will use peer review with additional feedback modalities but no nudges, while the test group will use peer review with the same feedback modalities plus nudges. Common statistical tests, such as ANOVA, t-tests, and correlations, will be used to validate the results. The resulting system could provide a significant and measurable improvement in outcomes in courses that use peer review across different STEM disciplines. The resulting technologies will be disseminated as open source to enable widespread adoption. The NSF IUSE: EHR Program supports research and development projects to improve the effectiveness of STEM education for all students. Through the Engaged Student Learning track, the program supports the creation, exploration, and implementation of promising practices and tools.
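As a rough illustration of how a control-versus-test comparison of this kind might be checked, the sketch below computes Welch's t statistic (one common form of the t-test) using only the Python standard library. The scores are made up for illustration and are not project data, and `welch_t` is a hypothetical helper name; the announcement does not specify which t-test variant or which outcome measure the study will use.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Return Welch's t statistic and its approximate degrees of freedom."""
    na, nb = len(sample_a), len(sample_b)
    # Squared standard error contributed by each group.
    se2_a = variance(sample_a) / na
    se2_b = variance(sample_b) / nb
    t = (mean(sample_a) - mean(sample_b)) / sqrt(se2_a + se2_b)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    dof = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (na - 1) + se2_b ** 2 / (nb - 1))
    return t, dof


# Hypothetical review-quality scores, invented purely for this example.
control = [3.1, 2.8, 3.4, 3.0, 2.9, 3.3]  # peer review without nudges
nudged = [3.6, 3.2, 3.9, 3.5, 3.4, 3.8]   # peer review with nudges

t_stat, dof = welch_t(nudged, control)
print(f"t = {t_stat:.2f}, approximate degrees of freedom = {dof:.1f}")
```

A positive statistic here only says the nudged group's mean is higher in this invented sample. Turning it into a p-value requires the t distribution, which a statistics package would normally supply.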

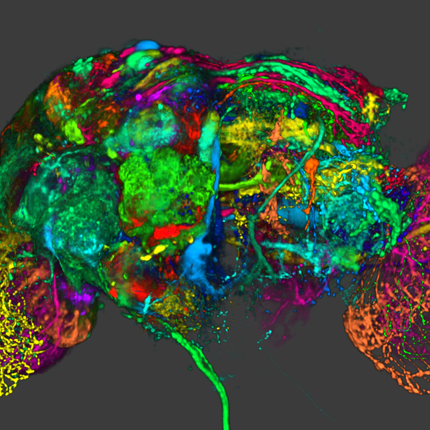

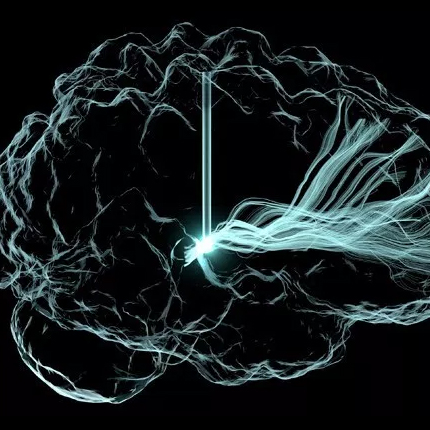

A physics-informed machine learning approach to dynamic blood flow analysis from static subtraction computed tomographic angiography imaging

Amir Arzani (NSF)

Recent investigations have shown that interactions of blood flow with blood vessel walls play an important role in the progression of cardiovascular diseases. Accurately quantifying blood flow or hemodynamic interactions could lead to methods for patient-specific therapies that result in better treatments and reduced mortality. In this project, the researchers will develop techniques for non-invasively inferring complex, dynamic hemodynamic behavior using a common medical imaging modality that is typically used to produce static anatomical images for analyzing blood vessel structure. The researchers propose to develop a novel physics-informed model of the blood flow using a deep-learning-based processing method. This will allow the researchers to infer dynamic, time-resolved, three-dimensional blood velocity and relative pressure fields. The results will be used to accurately compute relevant hemodynamic factors. This project will train a cohort of graduate students in the latest data-driven deep learning techniques in engineering. It will engage undergraduate students in research through well-established programs at UW Milwaukee and Northern Arizona University. Outreach to high school students, particularly those belonging to under-represented communities, will be accomplished through summer programs at UW Milwaukee.

The goal of this project is accurate image-based hemodynamic analysis using commonly available images. Contrast concentration, three-dimensional blood velocity, and relative pressure will be modeled as deep neural nets. Training the neural nets will involve a loss function that matches actual data from time-stamped sCTA sinograms with predicted sinograms generated using line integrals computed from forward evaluation of the neural net used to model the contrast concentration. Additionally, blood flow and contrast advection-diffusion physics will be used as constraints in the solution process. System noise will be handled through a Bayesian formulation of the deep learning algorithm. The neural net formulation will allow high-resolution sampling of the blood velocity and relative pressure fields and accurate computation of velocity-derived hemodynamic parameters using automatic differentiation. The methods will be validated using numerical and in vitro flow experiments using particle image velocimetry. By enabling the estimation of hemodynamic data from what, until now, has been considered to be static data, the proposed research maximizes the inference that can be derived from sCTA imaging data without the need for additional computed tomography hardware or new scan protocols.
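One way to picture the training objective described above is as a sinogram-mismatch term plus a physics residual. The following is only a sketch under stated assumptions, not the project's actual formulation. All symbols are introduced here for illustration: $c_\theta$ and $\mathbf{u}_\theta$ are the neural nets for contrast concentration and velocity, $s_i$ is the measured sinogram value along ray $\ell_i$ at time $t_i$, $D$ is a contrast diffusivity, $(\mathbf{x}_j, t_j)$ are points where the physics is enforced, and $\lambda$ is a weighting factor.

$$
\mathcal{L}(\theta) =
\underbrace{\sum_{i} \left( \int_{\ell_i} c_\theta(\mathbf{x}, t_i)\, ds - s_i \right)^{2}}_{\text{sinogram mismatch}}
+ \lambda \underbrace{\sum_{j} \left( \frac{\partial c_\theta}{\partial t} + \mathbf{u}_\theta \cdot \nabla c_\theta - D \nabla^{2} c_\theta \right)^{2} \Bigg|_{(\mathbf{x}_j,\, t_j)}}_{\text{advection-diffusion residual}}
$$

The second term vanishes when the modeled concentration satisfies the advection-diffusion equation $\partial c / \partial t + \mathbf{u} \cdot \nabla c = D \nabla^{2} c$. A further residual for the blood flow equations, involving the relative pressure net, would presumably be added in the same way, for example the incompressible Navier-Stokes equations, which is an assumption here. The Bayesian treatment of system noise is not shown. Because each net is a differentiable function of space and time, the derivatives in the residual can be obtained by automatic differentiation.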