VAPLS 2013 Workshop on Visualization and Analysis of Performance on Large-scale Software

Co-located with IEEE VIS 2013


Workshop Information

Date: Monday, October 14, 2013

Time: 8:30 am - 12:15 pm

Location: A 601+602

Workshop Overview

The hardware complexity of HPC systems has increased in parallel with the complexity of modern simulation and scale-bridging applications. Consequently, writing efficient software for these large-scale systems has become increasingly difficult. Understanding how hardware and software interact, and how those interactions affect scalability across large numbers of compute cores, is essential for optimizing HPC systems. In many cases, however, performance characteristics are simply too complex to comprehend. The purpose of this workshop is to cross-pollinate the expertise of specialists in performance analysis and visualization. By fostering new collaborations between these groups, we hope to connect those already developing performance tools with those visualizing software performance at all scales.

Organizers

Peer-Timo Bremer, Lawrence Livermore National Laboratory

Joshua A. Levine, Clemson University

Paul Rosen, University of Utah

Martin Schulz, Lawrence Livermore National Laboratory

Schedule

| First Session | ||

| 10 min | Joshua A. Levine Clemson University |

Welcome |

| 20 min | Katherine Isaacs UC Davis |

Introduction to Performance Analysis Concepts, Definitions, Challenges, Types of Data |

| 15 min | Paul Rosen University of Utah |

Working with Memory Visualizing data covering memory allocation and access patterns in memory |

| 15 min | Joshua A. Levine Clemson University |

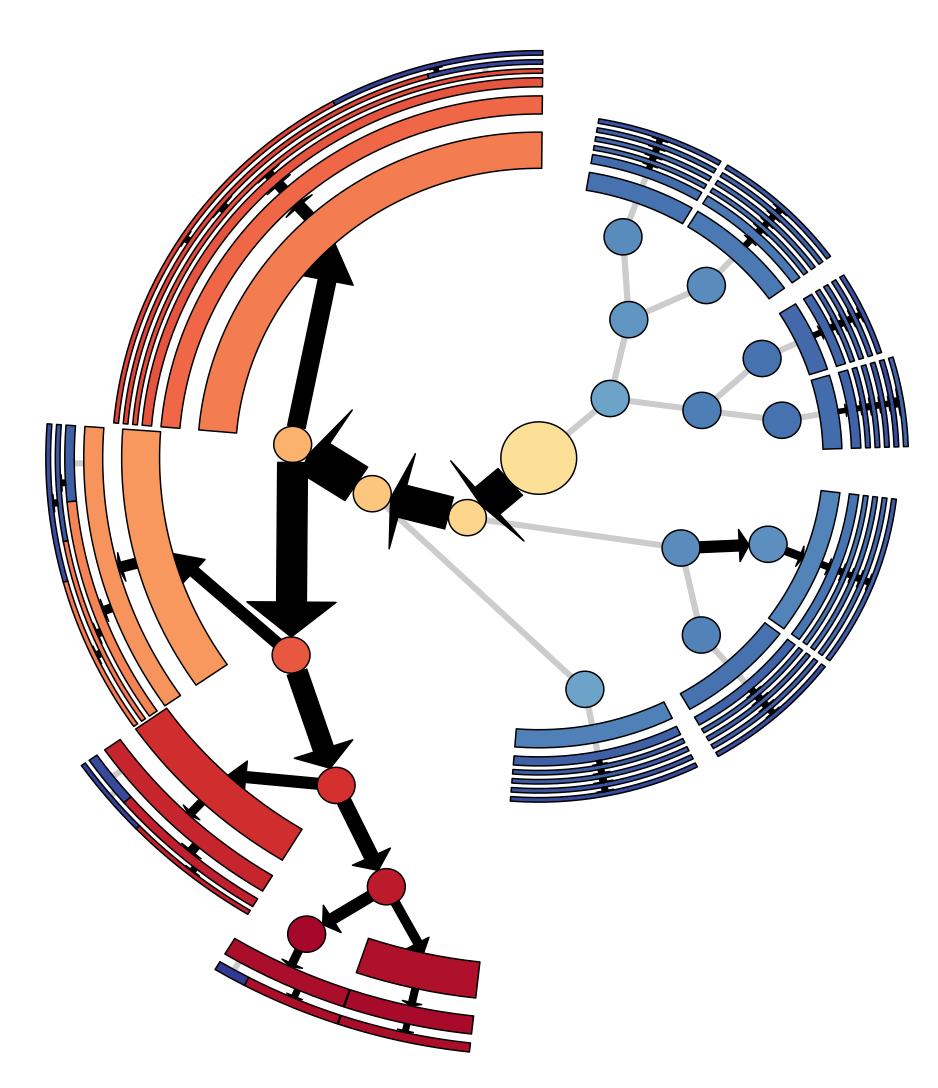

Working with Networks Visualizing data acquired by network measurements |

| 15 min | Alfredo Gimenez UC Davis |

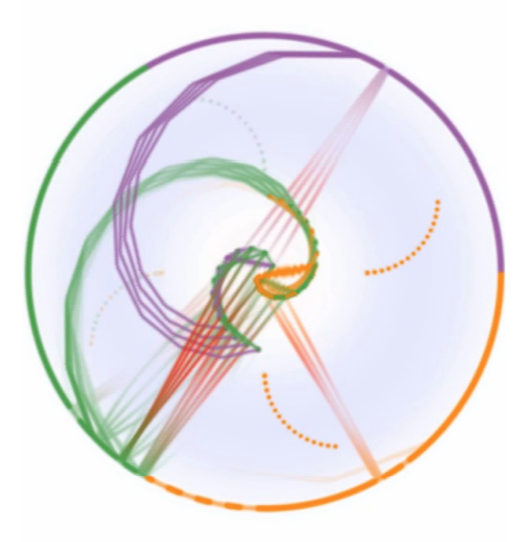

Working in the Application Domain Visualizing data by displaying it in the domain of scientific data |

| 30 min | Panel Discussion - Moderator: Josh Levine | Participants: Paul Rosen, Katherine Isaacs, Alfredo Gimenez | |

| 15 min | Break |

| Second Session | ||

| 30 min | Jonathan Lifflander University of Illinois Urbana-Champaign |

Projections: Scalable Performance Analysis and Visualization |

| 30 min | Richard Vuduc Georgia Tech |

Performance Analysis on HPC Systems |

| 45 min | Panel Discussion - Moderator: Josh Levine | Participants: Jonathan Lifflander, Richard Vuduc | |

Talk Abstracts

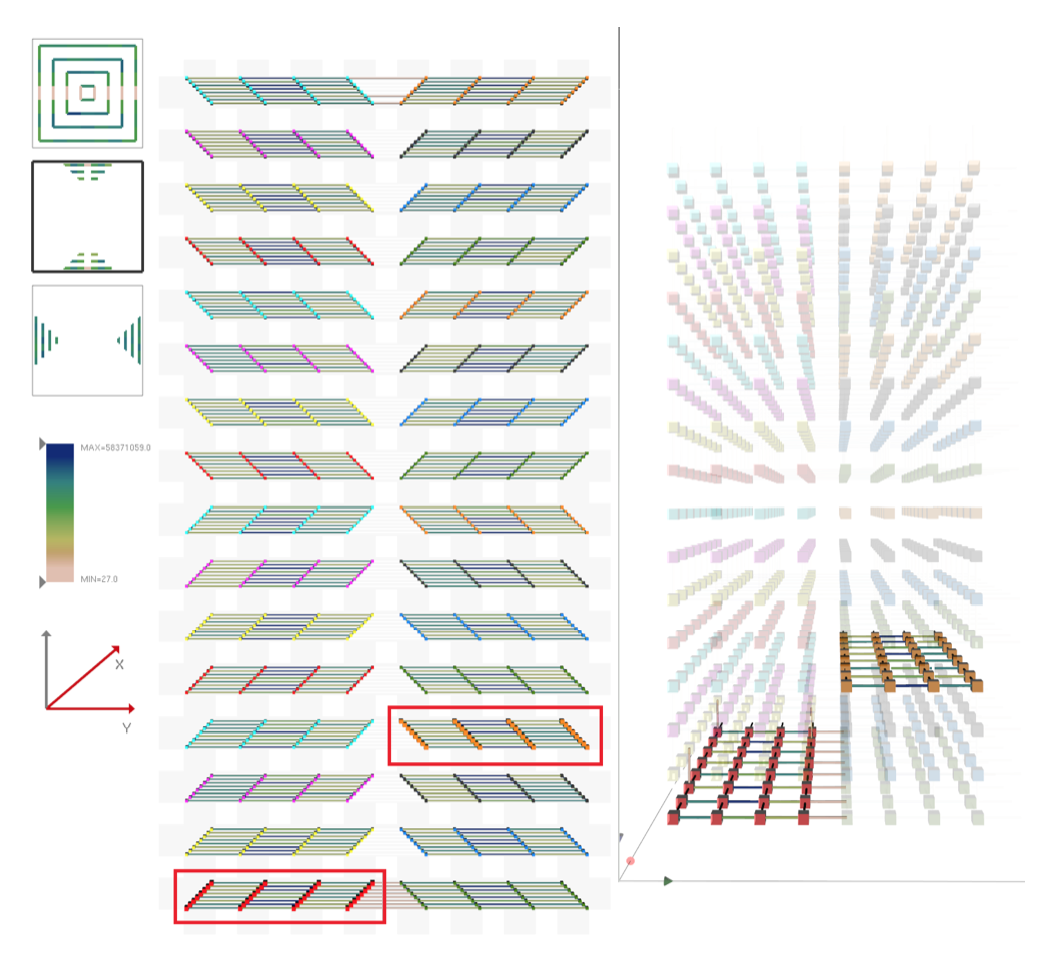

Jonathan Lifflander, University of Illinois Urbana-Champaign: Projections: Scalable Performance Analysis and Visualization

Projections is a performance visualization tool co-developed with the Charm++ parallel programming system. Analyzing the performance of a message-driven, load-balanced system such as Charm++ is complicated. However, the same message-driven runtime can be leveraged to automatically instrument important events at very low cost. Based on such instrumentation, Projections provides tools for extensive post-mortem analysis. A distinguishing feature of Projections is its comprehensive set of performance views, which lead to insights about the factors that affect performance. These include a rich timeline view and an outlier analysis tool. Projections also supports a novel, highly scalable live visualization tool for analyzing the performance characteristics of a running parallel program.

Richard Vuduc, Georgia Tech: Performance Analysis on HPC Systems

This talk offers a broad overview of the process of engineering fast, scalable code. It highlights what kind of data a performance engineer collects about a code artifact and how this data is used to guide performance tuning. Its aim is to stimulate a conversation between performance engineers and "visualizers" about opportunities for cross-disciplinary tools and research. (Whether it achieves this goal is, of course, up in the air, but it cannot hurt too much to try.)

Participants

Jonathan Lifflander, University of Illinois Urbana-Champaign

Jonathan Lifflander is a fifth-year PhD candidate at UIUC, advised by Laxmikant Kale. He is currently developing novel, low-cost algorithms for tracing, replaying, and visualizing parallel applications executed with scheduling strategies such as work stealing, which produce many fine-grained events. In 2013, he won the George Michael High Performance Computing award for research excellence and academic progress.

Richard Vuduc, Georgia Tech

Richard (Rich) Vuduc is an Associate Professor at the Georgia Institute of Technology ("Georgia Tech"), in the School of Computational Science and Engineering (CSE). His research lab, the HPC Garage (hpcgarage.org), is interested in all things high-performance computing, with an emphasis on parallel algorithms, performance analysis, and performance tuning. He is a member of the DARPA Computer Science Study Panel, a recipient of the NSF CAREER Award, and a co-recipient of the Gordon Bell Prize (2010). For his teaching, he received a Lockheed Martin Excellence in Teaching Award (2013). His lab's research has received a number of best paper nominations and awards, most recently the 2012 Best Paper Award from the SIAM Conference on Data Mining. At Georgia Tech, he currently serves as the Associate Chair for Academic Affairs in CSE and as the Director of Graduate Programs in CSE.

David Richards, Lawrence Livermore National Laboratory

David Richards received a B.S. in Physics from Harvey Mudd College in 1992 and a Ph.D. in Physics from the University of Illinois at Urbana-Champaign in 1999. He has over 15 years of experience in scientific computing as both a user and an application developer in academic, industrial, and national lab settings. David joined Lawrence Livermore National Laboratory in 2006, where he is a member of the Computational Materials Science Group in the Condensed Matter and Materials Division. David is currently the LLNL project leader for a joint IBM/LLNL project to develop high-performance cardiac modeling tools for Blue Gene/Q and is the proxy application task lead for the ExMatEx Co-Design Center. In 2007 he was a member of the team that won the IEEE/ACM Gordon Bell Award, and his teams were named finalists for that award in 2009 and 2012.

Peer-Timo Bremer, Lawrence Livermore National Laboratory

Peer-Timo received his PhD in 2004 from the University of California, Davis. He currently holds a shared appointment as a computer scientist at Lawrence Livermore National Laboratory and as the Associate Director for Research for the Center of Extreme Data Management Analysis and Visualization and the Scientific Computing and Imaging Institute at the University of Utah. Peer-Timo is a member of the PAVE team, a project at LLNL aimed at combining performance analysis, large-scale data analysis, and visualization. Peer-Timo has organized the workshop on Foundations on Topological Analysis at IEEE Visualization 2011, the tutorial on topology at IEEE Visualization 2010, and the TopoInVis 2013 conference held at Davis. Furthermore, Peer-Timo is an organizer of the Dagstuhl Perspectives Workshop 14022 on Connecting Performance Analysis and Visualization to Advance Extreme Scale Computing.

Joshua A. Levine, Clemson University

Josh has a PhD in computer science from The Ohio State University and completed a postdoc at the University of Utah's Scientific Computing and Imaging Institute. He is currently an assistant professor in the Visual Computing division of Clemson University's School of Computing. His research interests include geometric modeling, visualization, mesh generation, medical imaging, and computational topology. Josh co-organized a series of three workshops, MeshMed 2011-2013, co-located with MICCAI, to bring together the mesh generation and medical imaging communities. With organizers Bremer and Schulz, he is part of the PAVE team, a project at LLNL aimed at combining performance analysis, large-scale data analysis, and visualization.

Todd Gamblin, Lawrence Livermore National Laboratory

Todd is a computer scientist in the Center for Applied Scientific Computing at Lawrence Livermore National Laboratory. His research focuses on scalable algorithms for measuring, analyzing, and visualizing performance data from massively parallel applications. He is also interested in fault tolerance, resilience, MPI, and parallel programming models. Todd is the team leader for the Performance Analysis and Visualization at Exascale (PAVE) project. He also works on numerous DOE ASCR, SciDAC, and ASC projects. Todd received his Ph.D. and M.S. degrees in Computer Science from the University of North Carolina at Chapel Hill in 2009 and 2005, respectively. He received his B.A. in Computer Science and Japanese from Williams College in 2002. He has been at LLNL since 2008.

Paul Rosen, University of Utah

Paul received his PhD in 2010 from Purdue University. He has been a Research Assistant Professor at the Scientific Computing and Imaging Institute at the University of Utah since 2010. Paul is a coauthor of 30 peer-reviewed publications, several of which address memory performance visualization for both single-threaded CPUs and multi-threaded GPUs.

Martin Schulz, Lawrence Livermore National Laboratory

Martin is a Computer Scientist at the Center for Applied Scientific Computing (CASC) at Lawrence Livermore National Laboratory (LLNL). He earned his Doctorate in Computer Science in 2001 from the Technische Universität München (Munich, Germany) and also holds a Master of Science in Computer Science from the University of Illinois at Urbana-Champaign. He has published over 140 peer-reviewed papers and has given over 20 tutorials on performance tools and related topics. Martin served as general chair for PACT (Parallel Architectures and Compilation Techniques) 2009 and SCI Europe 2001, organized a range of international workshops, including HIPS 2000, 2003, and 2008, and has served on the organizing committees of numerous international conferences. Martin is also an organizer of the Dagstuhl Perspectives Workshop 14022 on Connecting Performance Analysis and Visualization to Advance Extreme Scale Computing. Further, Martin is a member of the PAVE team, a project at LLNL aimed at combining performance analysis, large-scale data analysis, and visualization. His research interests include parallel and distributed architectures and applications; performance monitoring, modeling, and analysis; tool support for parallel programming; and power efficiency for parallel systems.