Visualization

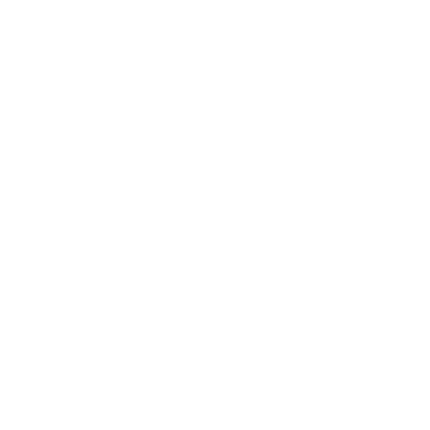

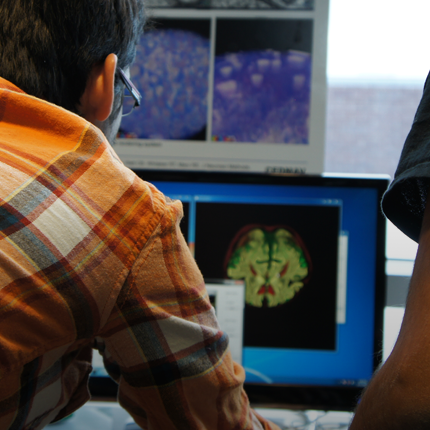

Visualization, sometimes referred to as visual data analysis, uses graphical representations of data to gain understanding and insight into the data. Visualization research at SCI has focused on applications including computational fluid dynamics, medical imaging and analysis, biomedical data analysis, healthcare data analysis, weather data analysis, poetry, network and graph analysis, and financial data analysis. Research ranges from developing novel algorithms and techniques to building tools and systems that assist in the comprehension of massive amounts of (scientific) data. We also research the process of creating successful visualizations.

We strongly believe in the role of interactivity in visual data analysis. Therefore, much of our research is concerned with creating visualizations that are intuitive to interact with and also render at interactive rates.

Visualization at SCI includes the academic subfields of Scientific Visualization, Information Visualization and Visual Analytics.

Mike Kirby

Uncertainty Visualization

Alex Lex

Information Visualization

Centers and Labs:

- Visualization Design Lab (VDL)

- CEDMAV

- POWDER Display Wall

Funded Research Projects:

- Modeling, Display, and Understanding Uncertainty in Simulations for Policy Decision Making

- Topological Data Analysis for Large Network Visualization

Publications in Visualization:

Visual Exploratory Analysis for Designing Large-Scale Network-on-Chip Architectures: A Domain Expert-Led Design Study, S. Wang, H. Yan, K.E. Isaacs, Y. Sun. In IEEE Transactions on Visualization and Computer Graphics, Vol. 30, pp. 1970-1983. 2024.

Visualization design studies bring together visualization researchers and domain experts to address yet unsolved data analysis challenges stemming from the needs of the domain experts. Typically, the visualization researchers lead the design study process and implementation of any visualization solutions. This setup leverages the visualization researchers' knowledge of methodology, design, and programming, but the availability to synchronize with the domain experts can hamper the design process. We consider an alternative setup where the domain experts take the lead in the design study, supported by the visualization experts. In this study, the domain experts are computer architecture experts who simulate and analyze novel computer chip designs. These chips rely on a Network-on-Chip (NOC) to connect components. The experts want to understand how the chip designs perform and what in the design led to their performance. To aid this analysis, we develop Vis4Mesh, a visualization system that provides spatial, temporal, and architectural context to simulated NOC behavior. Integration with an existing computer architecture visualization tool enables architects to perform deep-dives into specific architecture component behavior. We validate Vis4Mesh through a case study and a user study with computer architecture researchers. We reflect on our design and process, discussing advantages, disadvantages, and guidance for engaging in domain expert-led design studies.

Design Concerns for Integrated Scripting and Interactive Visualization in Notebook Environments, C. Scully-Allison, I. Lumsden, K. Williams, J. Bartels, M. Taufer, S. Brink, A. Bhatele, O. Pearce, K. Isaacs. In IEEE Transactions on Visualization and Computer Graphics, IEEE, 2024. DOI: 10.1109/TVCG.2024.3354561

Interactive visualization can support fluid exploration but is often limited to predetermined tasks. Scripting can support a vast range of queries but may be more cumbersome for free-form exploration. Embedding interactive visualization in scripting environments, such as computational notebooks, provides an opportunity to leverage the strengths of both direct manipulation and scripting. We investigate interactive visualization design methodology, choices, and strategies under this paradigm through a design study of calling context trees used in performance analysis, a field which exemplifies typical exploratory data analysis workflows with Big Data and hard to define problems. We first produce a formal task analysis assigning tasks to graphical or scripting contexts based on their specificity, frequency, and suitability. We then design a notebook-embedded interactive visualization and validate it with intended users. In a follow-up study, we present participants with multiple graphical and scripting interaction modes to elicit feedback about notebook-embedded visualization design, finding consensus in support of the interaction model. We report and reflect on observations regarding the process and design implications for combining visualization and scripting in notebooks.

Bimodal Visualization of Industrial X-Ray and Neutron Computed Tomography Data, X. Huang, H. Miao, A. Townsend, K. Champley, J. Tringe, V. Pascucci, P.T. Bremer. In IEEE Transactions on Visualization and Computer Graphics, IEEE, 2024. DOI: 10.1109/TVCG.2024.3382607

Advanced manufacturing creates increasingly complex objects with material compositions that are often difficult to characterize by a single modality. Our collaborating domain scientists are going beyond traditional methods by employing both X-ray and neutron computed tomography to obtain complementary representations expected to better resolve material boundaries. However, the use of two modalities creates its own challenges for visualization, requiring either complex adjustments of bimodal transfer functions or the need for multiple views. Together with experts in nondestructive evaluation, we designed a novel interactive bimodal visualization approach to create a combined view of the co-registered X-ray and neutron acquisitions of industrial objects. Using an automatic topological segmentation of the bivariate histogram of X-ray and neutron values as a starting point, the system provides a simple yet effective interface to easily create, explore, and adjust a bimodal visualization. We propose a widget with simple brushing interactions that enables the user to quickly correct the segmented histogram results. Our semiautomated system enables domain experts to intuitively explore large bimodal datasets without the need for either advanced segmentation algorithms or knowledge of visualization techniques. We demonstrate our approach using synthetic examples, industrial phantom objects created to stress bimodal scanning techniques, and real-world objects, and we discuss expert feedback.
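To make the "bivariate histogram of X-ray and neutron values" concrete, here is a minimal Python sketch of how such a joint histogram could be computed for two co-registered volumes. It is not taken from the paper: the function name `bivariate_histogram`, the bin count, and the random stand-in volumes are assumptions for illustration, and the topological segmentation step described in the abstract is not shown.

```python
import numpy as np


def bivariate_histogram(xray, neutron, bins=64):
    """Count voxels per (X-ray value, neutron value) bin for co-registered volumes."""
    if xray.shape != neutron.shape:
        raise ValueError("volumes must be sampled on the same grid")
    # Each voxel contributes one (xray, neutron) sample to the 2D histogram.
    hist, xray_edges, neutron_edges = np.histogram2d(
        xray.ravel(), neutron.ravel(), bins=bins
    )
    return hist, xray_edges, neutron_edges


# Hypothetical stand-in data: two random 16^3 volumes on the same grid.
rng = np.random.default_rng(0)
xray = rng.random((16, 16, 16))
neutron = rng.random((16, 16, 16))
hist, _, _ = bivariate_histogram(xray, neutron, bins=32)
```

Every voxel falls into exactly one bin, so the histogram entries sum to the voxel count. A segmentation of this 2D array would then group bins into candidate material classes.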

A Comparative Study of the Perceptual Sensitivity of Topological Visualizations to Feature Variations, T. M. Athawale, B. Triana, T. Kotha, D. Pugmire, P. Rosen. In IEEE Transactions on Visualization and Computer Graphics, Vol. 30, No. 1, pp. 1074-1084. Jan, 2024. DOI: 10.1109/TVCG.2023.3326592

Color maps are a commonly used visualization technique in which data are mapped to optical properties, e.g., color or opacity. Color maps, however, do not explicitly convey structures (e.g., positions and scale of features) within data. Topology-based visualizations reveal and explicitly communicate structures underlying data. Although we have a good understanding of what types of features are captured by topological visualizations, we do not have a comparably good understanding of how people perceive those features. This paper evaluates the sensitivity of topology-based isocontour, Reeb graph, and persistence diagram visualizations compared to a reference color map visualization for synthetically generated scalar fields on 2-manifold triangular meshes embedded in 3D. In particular, we built and ran a human-subject study that evaluated the perception of data features characterized by Gaussian signals and measured how effectively each visualization technique portrays variations of data features arising from the position and amplitude variation of a mixture of Gaussians. For positional feature variations, the results showed that only the Reeb graph visualization had high sensitivity. For amplitude feature variations, persistence diagrams and color maps demonstrated the highest sensitivity, whereas isocontours showed only weak sensitivity. These results take an important step toward understanding which topology-based tools are best for various data and task scenarios and their effectiveness in conveying topological variations as compared to conventional color mapping.

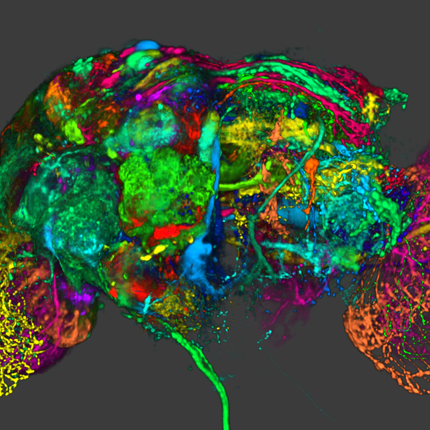

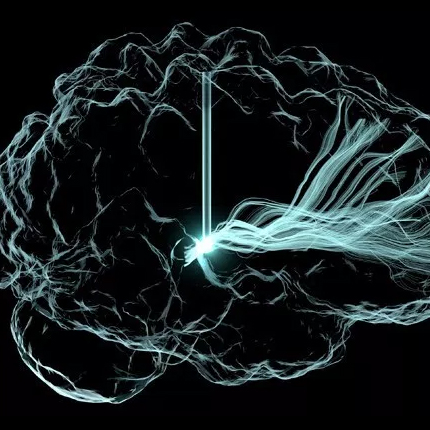

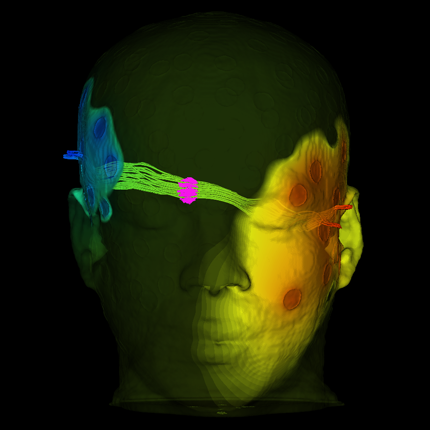

Grand Challenges at the Interface of Engineering and Medicine, S. Subramaniam, M. Miller, several co-authors, Chris R. Johnson, et al. In IEEE Open Journal of Engineering in Medicine and Biology, Vol. 5, IEEE, pp. 1-13. 2024. DOI: 10.1109/OJEMB.2024.3351717

Over the past two decades Biomedical Engineering has emerged as a major discipline that bridges societal needs of human health care with the development of novel technologies. Every medical institution is now equipped at varying degrees of sophistication with the ability to monitor human health in both non-invasive and invasive modes. The multiple scales at which human physiology can be interrogated provide a profound perspective on health and disease. We are at the nexus of creating “avatars” (herein defined as an extension of “digital twins”) of human patho/physiology to serve as paradigms for interrogation and potential intervention. Motivated by the emergence of these new capabilities, the IEEE Engineering in Medicine and Biology Society, the Departments of Biomedical Engineering at Johns Hopkins University and Bioengineering at University of California at San Diego sponsored an interdisciplinary workshop to define the grand challenges that face biomedical engineering and the mechanisms to address these challenges. The Workshop identified five grand challenges with cross-cutting themes and provided a roadmap for new technologies, identified new training needs, and defined the types of interdisciplinary teams needed for addressing these challenges. The themes presented in this paper include: 1) accumedicine through creation of avatars of cells, tissues, organs and whole human; 2) development of smart and responsive devices for human function augmentation; 3) exocortical technologies to understand brain function and treat neuropathologies; 4) the development of approaches to harness the human immune system for health and wellness; and 5) new strategies to engineer genomes and cells.

Interactive Visualization and Portable Image Blending of Massive Aerial Image Mosaics, S. Petruzza, B. Summa, A. Gooch, C.M. Laney, T. Goulden, J. Schreiner, S. Callahan, V. Pascucci. In IEEE International Conference on Big Data, IEEE, pp. 3365-3370. 2023.

Processing, managing and publishing the substantial volume of data collected through modern remote sensing technologies in a format that is easy for researchers - across broad skill levels and scientific domains - to view and use presents a formidable challenge. As a prime example, the massive scale of image mosaics produced by NEON’s Airborne Observation Platform (AOP), often several to hundreds of gigabytes in volume, demands efficient data management strategies. Additionally, these aerial mosaics frequently exhibit seams due to variations in lighting conditions during the data acquisition process. These seams undermine the integrity of subsequent scientific analyses, introducing distortions that hinder accurate interpretation of ecological patterns. Finally, one of NEON’s core objectives is to make these data broadly accessible to users, including those who are not yet versed in working with remote sensing data or who wish to view the datasets without needing to download and process them. In response to these challenges, we have developed a comprehensive data management pipeline that enables interactive access for analysis and visualization of NEON’s aerial mosaic collection. This pipeline automates data ingestion, conversion, and publication in a streamable format, facilitating seamless user interaction through web viewers and programming APIs. Moreover, we have implemented a portable blending algorithm aimed at eliminating these problematic seams from large aerial mosaics. This algorithm, grounded in the Conjugate Gradient (CG) method, has been implemented both in CUDA and using the modern SYCL programming model for enhanced portability across diverse computing platforms. Experimental results demonstrate scalable performance across both CPU and GPU architectures. This work not only addresses the challenges of large aerial data management and seam removal but also opens avenues for more accurate and comprehensive scientific investigations within the NEON ecosystem.
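The abstract above names the Conjugate Gradient method but does not spell out the linear system being solved. As a hedged illustration only, the Python sketch below runs a textbook CG iteration on a 1D Poisson-style problem, a common way to pose gradient-domain blending: interior values are solved so that the result has zero second difference between two fixed boundary intensities. The function names, the 1D setup, and the boundary values are assumptions for this example and are not the paper's CUDA or SYCL implementation.

```python
import numpy as np


def apply_laplacian(u):
    """Matrix-free 1D operator: (A u)_i = 2 u_i - u_{i-1} - u_{i+1}, zero outside."""
    out = 2.0 * u
    out[1:] -= u[:-1]
    out[:-1] -= u[1:]
    return out


def conjugate_gradient(apply_a, b, tol=1e-12, max_iter=1000):
    """Solve A x = b for a symmetric positive definite operator A."""
    x = np.zeros_like(b)
    r = b - apply_a(x)          # initial residual
    p = r.copy()                # initial search direction
    rs_old = float(r @ r)
    for _ in range(max_iter):
        if np.sqrt(rs_old) < tol:
            break
        ap = apply_a(p)
        alpha = rs_old / float(p @ ap)   # step length along p
        x += alpha * p
        r -= alpha * ap
        rs_new = float(r @ r)
        p = r + (rs_new / rs_old) * p    # next A-conjugate direction
        rs_old = rs_new
    return x


def blend_seam(left_value, right_value, n):
    """Fill n samples between two fixed intensities with a seam-free transition."""
    b = np.zeros(n)
    b[0] += left_value    # Dirichlet boundary on the left
    b[-1] += right_value  # Dirichlet boundary on the right
    return conjugate_gradient(apply_laplacian, b)


# Hypothetical seam: brightness 0.2 on one side, 0.8 on the other.
ramp = blend_seam(0.2, 0.8, 50)
```

With a zero right-hand side apart from the boundary terms, the solution is a linear ramp between the two intensities. A real 2D blend would use the image Laplacian and gradient targets from the source tiles instead.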

Attribute-Aware RBFs: Interactive Visualization of Time Series Particle Volumes Using RT Core Range Queries, N. Morrical, S. Zellmann, A. Sahistan, P. Shriwise, V. Pascucci. In IEEE Transactions on Visualization and Computer Graphics, IEEE, 2023. DOI: 10.1109/TVCG.2023.3327366

Ray Tracing Spherical Harmonics Glyphs, C. Peters, T. Patel, W. Usher, C.R. Johnson. In Vision, Modeling, and Visualization, The Eurographics Association, 2023. DOI: 10.2312/vmv.20231223

Spherical harmonics glyphs are an established way to visualize high angular resolution diffusion imaging data. Starting from a unit sphere, each point on the surface is scaled according to the value of a linear combination of spherical harmonics basis functions. The resulting glyph visualizes an orientation distribution function. We present an efficient method to render these glyphs using ray tracing. Our method constructs a polynomial whose roots correspond to ray-glyph intersections. This polynomial has degree 2k + 2 for spherical harmonics bands 0, 2, ..., k. We then find all intersections in an efficient and numerically stable fashion through polynomial root finding. Our formulation also gives rise to a simple formula for normal vectors of the glyph. Additionally, we compute a nearly exact axis-aligned bounding box to make ray tracing of these glyphs even more efficient. Since our method finds all intersections for arbitrary rays, it lets us perform sophisticated shading and uncertainty visualization. Compared to prior work, it is faster, more flexible and more accurate.
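As a rough illustration of where a degree 2k + 2 polynomial can come from, the Python sketch below handles only bands 0 and 2 (k = 2). It is not the authors' implementation. The function name, the coefficient ordering, the sign convention of the real spherical harmonics, the use of numpy's generic root finder, and the derivation in the comments are all assumptions made for this example. It models the glyph as the set of points p with |p| = f(p/|p|), where f is the linear combination of basis functions.

```python
import numpy as np
from numpy.polynomial import Polynomial

# Normalization constants of the real spherical harmonics for bands 0 and 2.
K00 = 0.5 * np.sqrt(1.0 / np.pi)
K2A = 0.5 * np.sqrt(15.0 / np.pi)   # shared by m = -2, -1, 1
K20 = 0.25 * np.sqrt(5.0 / np.pi)
K22 = 0.25 * np.sqrt(15.0 / np.pi)


def ray_glyph_intersections(origin, direction, coeffs):
    """Return sorted t > 0 where origin + t * direction lies on the glyph.

    coeffs = (c00, c2m2, c2m1, c20, c21, c22) is a hypothetical coefficient order.
    """
    c00, c2m2, c2m1, c20, c21, c22 = coeffs
    # Each coordinate along the ray is a degree-1 polynomial in t.
    x, y, z = (Polynomial([o, d]) for o, d in zip(origin, direction))
    r2 = x * x + y * y + z * z  # |p|^2, degree 2 in t

    # Homogeneous quadratic form with P(p) = |p|^2 * f(p / |p|).
    P = (c00 * K00 * r2
         + c2m2 * K2A * x * y
         + c2m1 * K2A * y * z
         + c20 * K20 * (3 * z * z - r2)
         + c21 * K2A * x * z
         + c22 * K22 * (x * x - y * y))

    # |p| = f(p/|p|)  =>  |p|^3 = P(p)  =>  |p|^6 - P(p)^2 = 0  (degree 2k + 2 = 6).
    poly = r2 ** 3 - P * P

    hits = []
    for t in poly.roots():
        if abs(t.imag) > 1e-9 or t.real <= 0.0:
            continue  # complex root, or intersection behind the ray origin
        # Squaring also admits roots with P < 0; keep those where the radius equals f.
        if r2(t.real) > 1e-12 and P(t.real) >= 0.0:
            hits.append(float(t.real))
    return sorted(hits)


# Constant f = 1 (a unit sphere): only the band-0 coefficient is non-zero.
unit_sphere = (2.0 * np.sqrt(np.pi), 0.0, 0.0, 0.0, 0.0, 0.0)
hits = ray_glyph_intersections((0.5, 0.0, -3.0), (0.0, 0.0, 1.0), unit_sphere)
```

For the unit-sphere check, the ray enters and leaves the sphere at t = 3 ± sqrt(0.75). A companion-matrix root finder like this is convenient for a sketch, but the abstract's claims about efficiency and numerical stability refer to the authors' own root-finding approach, which is not reproduced here.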