News

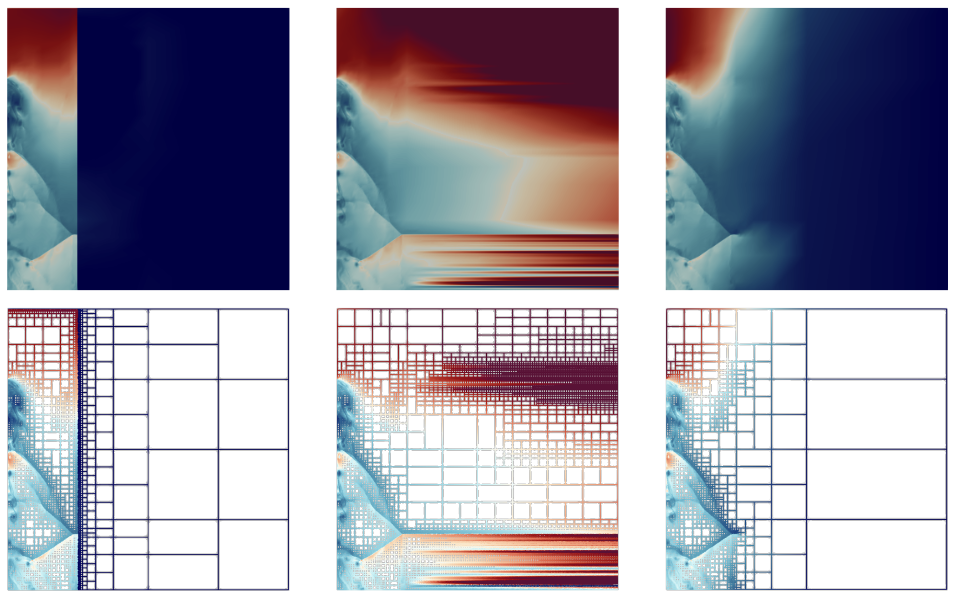

Best Paper Awarded at IEEE Pacific Visualization 2022

Congratulations to Harsh Bhatia (LLNL/SCI Alumnus), Duong Hoang (SCI), Nathan Morrical (SCI), Peter Lindstrom (LLNL), Peer-Timo Bremer (LLNL/SCI), and Valerio Pascucci (SCI) for receiving the Best Paper Award at the IEEE Pacific Visualization 2022 Conference for their paper "AMM: Adaptive Multilinear Meshes."

Anna Busatto and Lindsay Rupp Accepted to Simula Summer School in Computational Physiology

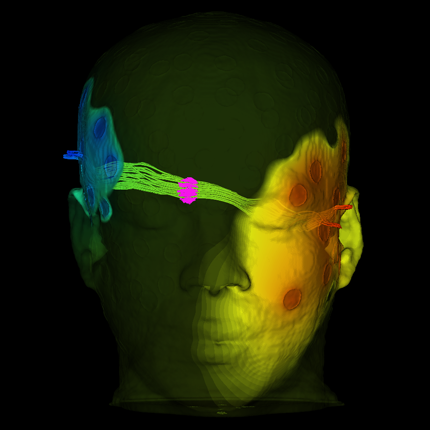

Anna Busatto and Lindsay Rupp, PhD students in the Biomedical Engineering Department and the SCI Institute, who study with Dr. Rob MacLeod and the CEG group, were two of the thirty accepted participants for the Simula Summer School in Computational Physiology 2022. This summer-long program is a unique opportunity for graduate students to participate in lectures and projects, first in Oslo, Norway and then in San Diego, CA. A big benefit is the chance to network with mentors and graduate students from around the world. By getting accepted to the summer school, Anna and Lindsay also receive travel support and accommodations for both components of the program.

SCI alum Juliana Freire honored as an AAAS lifetime fellow

Congratulations to SCI alum Juliana Freire, who has been honored as an AAAS lifetime fellow. In a tradition stretching back to 1874, the AAAS Council elects as Fellows a distinguished cadre of scientists, engineers, and innovators who have been recognized for their achievements across disciplines. The 2021 class of AAAS Fellows includes 564 scientists, engineers, and innovators spanning 24 disciplines.

U of U Health Professor and SCI Collaborator Receives Pew Charitable Trust Innovation Award

Gabrielle Kardon, Ph.D., a professor of Human Genetics at University of Utah Health, is a recipient of a 2021 Pew Charitable Trust Innovation Award. She is one of 12 accomplished scientists with expertise in molecular biology, genetics, immunology, neuroscience, or other key fields who were selected to investigate critical issues linked to human health.

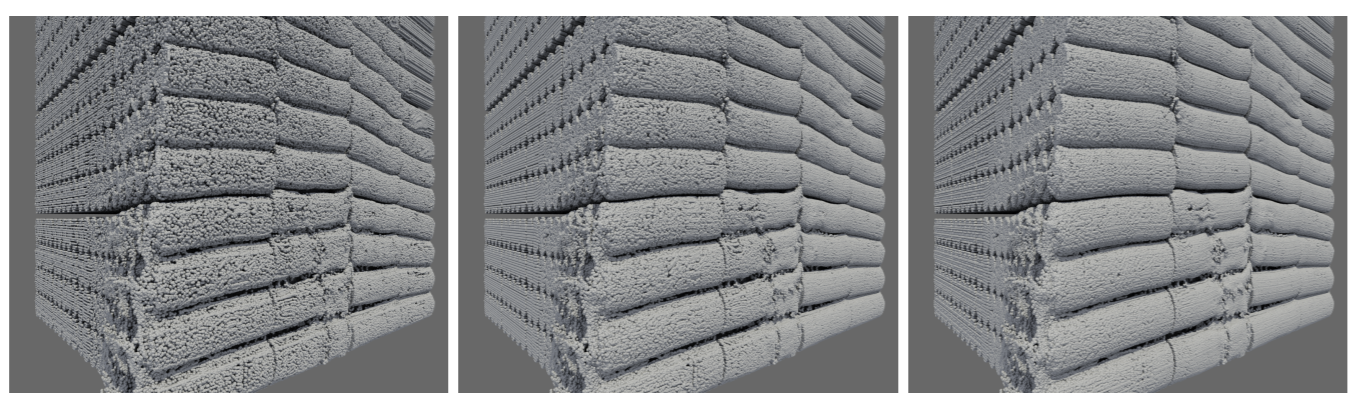

ShapeWorks 6.2 Now Available

To download installation packages for Windows/Mac/Linux and/or the source code, please visit https://github.com/SCIInstitute/ShapeWorks/releases/tag/v6.2.0

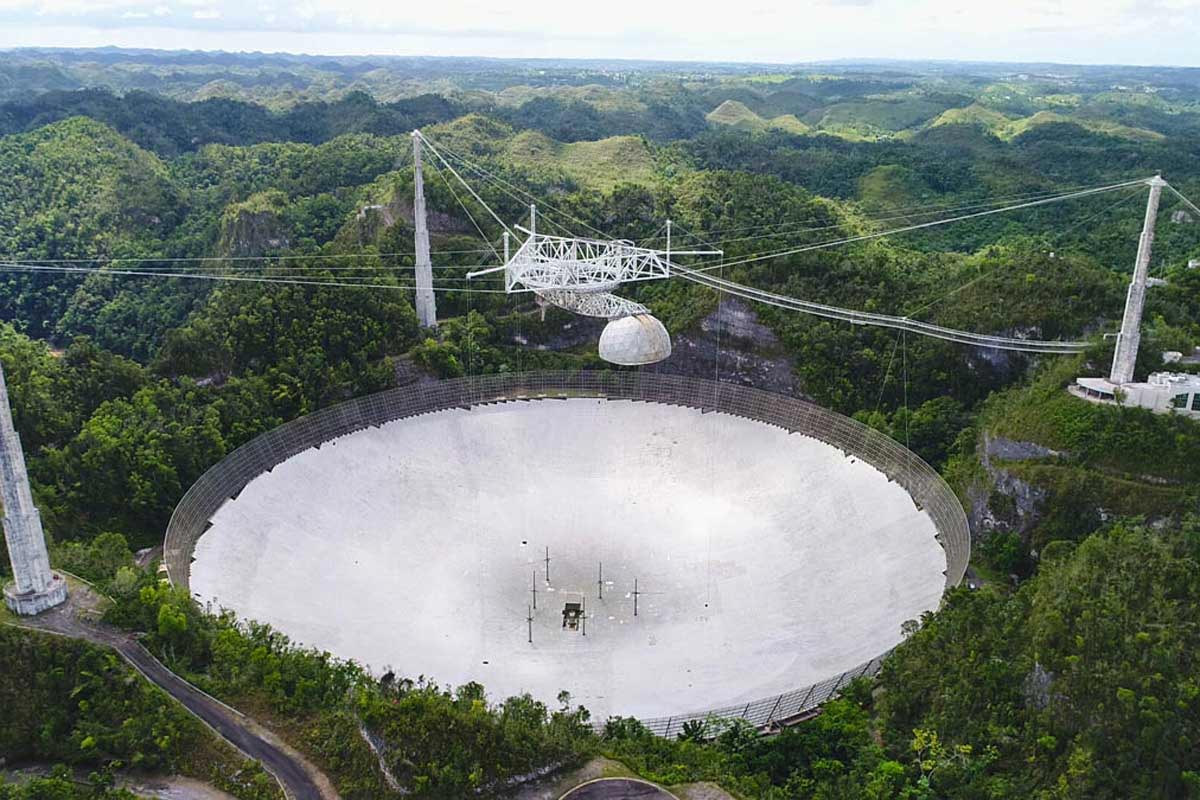

Moving Observatory Data Safely - HPC Wire Readers' Choice Award

In December of last year, the renowned Arecibo Observatory in Puerto Rico collapsed in spectacular fashion when the 900-ton equipment platform, suspended about 500 feet above the dish, came crashing down due to a support cable unraveling. Fortunately, no one was hurt, but the question remained: What about the invaluable astronomical and atmospheric science data that was collected for decades by the observatory’s 1,000-foot-wide reflector dish? University of Utah Scientific Computing and Imaging (SCI) Institute and School of Computing professor Valerio Pascucci and U faculty members were part of a consortium of researchers responsible for retrieving that precious data and moving it to a safe location.

Bringing Fairness in AI to the Forefront of Education

Bei Wang Phillips, a faculty member at the SCI Institute and an Assistant Professor at the School of Computing, and Arul Mishra and Himanshu Mishra, both Professors of Marketing at the David Eccles School of Business, applied for the competitive Deep Tech grant offered by the State of Utah’s Office of the Commissioner of Higher Education. They were awarded a 3-year grant of about $340,000 to develop courses and modules on AI ethics and fairness that would bring fair and equitable AI to the forefront of education.

LDAV 2021 Best Paper Honorable Mention

Congratulations to Duong Hoang, Harsh Bhatia, Peter Lindstrom, and Valerio Pascucci on receiving best paper honorable mention at the IEEE Symposium on Large Data Analysis and Visualization (LDAV) for their paper titled "High-quality and Low-memory-footprint Progressive Decoding of Large-scale Particle Data."

SCI at University of Utah Accelerates Visual Computing via oneAPI

oneAPI cross-architecture programming & Intel® oneAPI Rendering Toolkit to Improve Large-scale Simulations, Data Analytics & Visualization for Scientific Workflows

[Oct. 26, 2021] - The Scientific Computing and Imaging (SCI) Institute at the University of Utah is pleased to announce that it is expanding its Intel Graphics and Visualization Institute of Xellence (Intel GVI) to an Intel oneAPI Center of Excellence (CoE). The oneAPI Center of Excellence will focus on advancing research, development, and teaching of the latest visual computing innovations in ray tracing and rendering, and on using oneAPI to accelerate compute across heterogeneous architectures (CPUs, GPUs including the upcoming Intel Xe architecture, and other accelerators). Adopting oneAPI’s cross-architecture programming model provides a path to achieve maximum efficiency in multi-architecture deployments supporting CPUs + accelerators. This core approach based on open standards will allow fast, agile development and support new, advanced features without costly management of multiple vendors’ specific proprietary code bases.

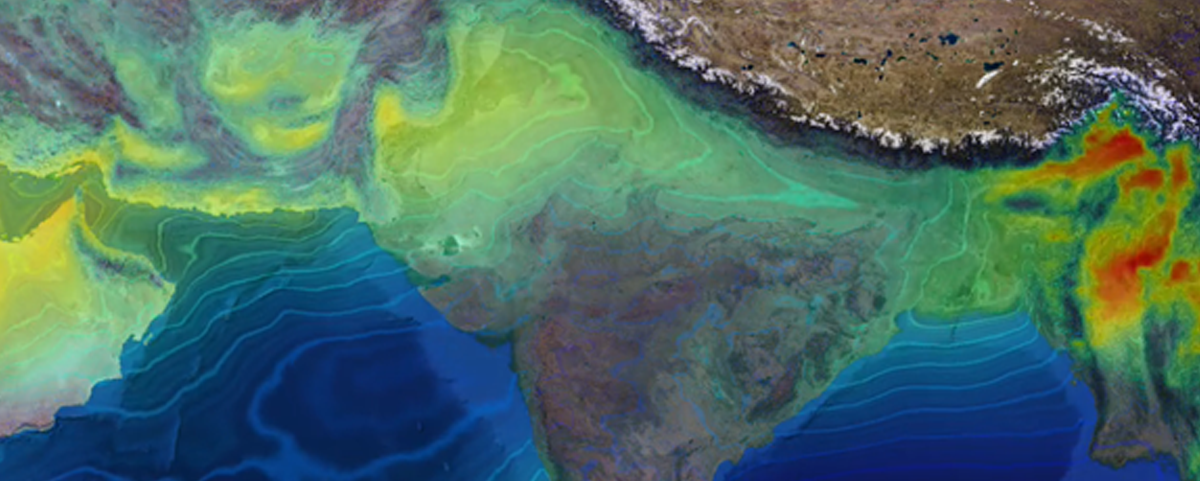

Democratizing Data Access

The University of Utah’s Scientific Computing and Imaging (SCI) Institute is leading a new initiative to democratize data access. The National Science Foundation (NSF) awarded a $5.6 million project to a team of researchers led by School of Computing professor Valerio Pascucci (pictured), who is also director of the Center for Extreme Data Management in the College of Engineering, to build the critical infrastructure needed to connect large-scale experimental and computational facilities and recruit others to data-driven sciences.

Chuck Hansen Elected to IEEE Board of Governors

Congratulations to Chuck Hansen on being elected to the IEEE Board of Governors for 2022. The IEEE Computer Society relies on a fully elected Board of Governors (BOG) to drive its vision forward, provide policy guidance to program boards and committees, and review the performance of the organization to ensure compliance with its policy directions.